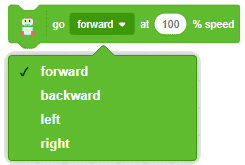

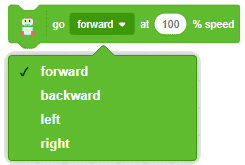

The block moves the Quarky robot in the specified direction. The direction can be “FORWARD”, “BACKWARD”, “LEFT”, or “RIGHT”.

- Forward:

- Backward:

- Left:

- Right:

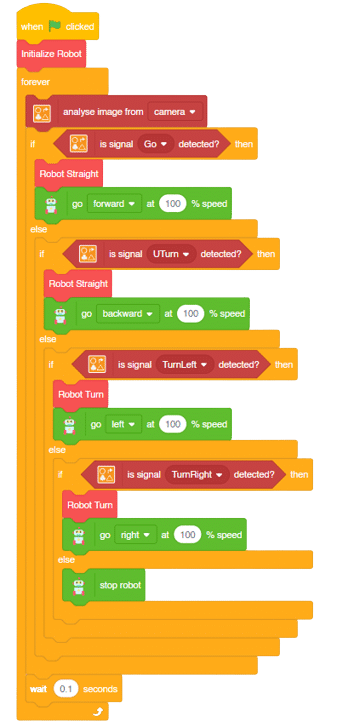

A sign detector Mars Rover is a robot that can recognize and interpret signs or signals given by a human, such as hand gestures or verbal commands. It uses sensors, cameras, and machine learning algorithms to detect and understand the sign, and then performs the action that corresponds to the detected signal.

These robots are often used in manufacturing, healthcare, and customer service industries to assist with tasks that require human-like interaction and decision making.

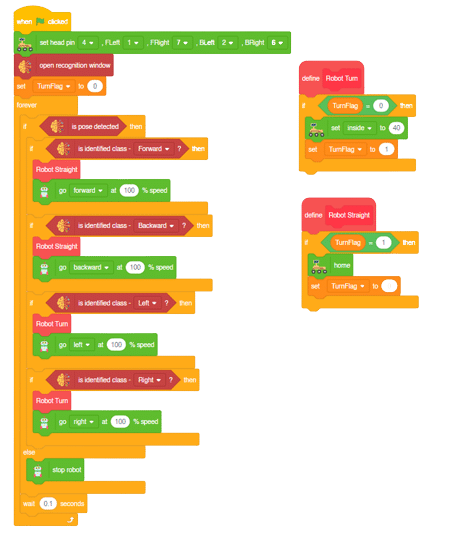

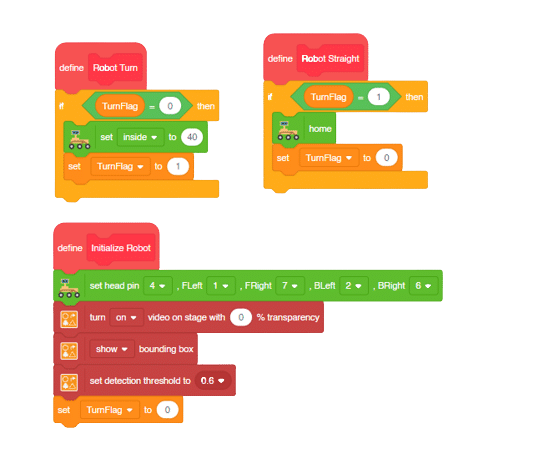

Initializing the Functions:

Main Code

Forward-Backward Motions:

Right-Left Motions:

In this activity, we will control the Mars Rover from our own devices using the Dabble application.

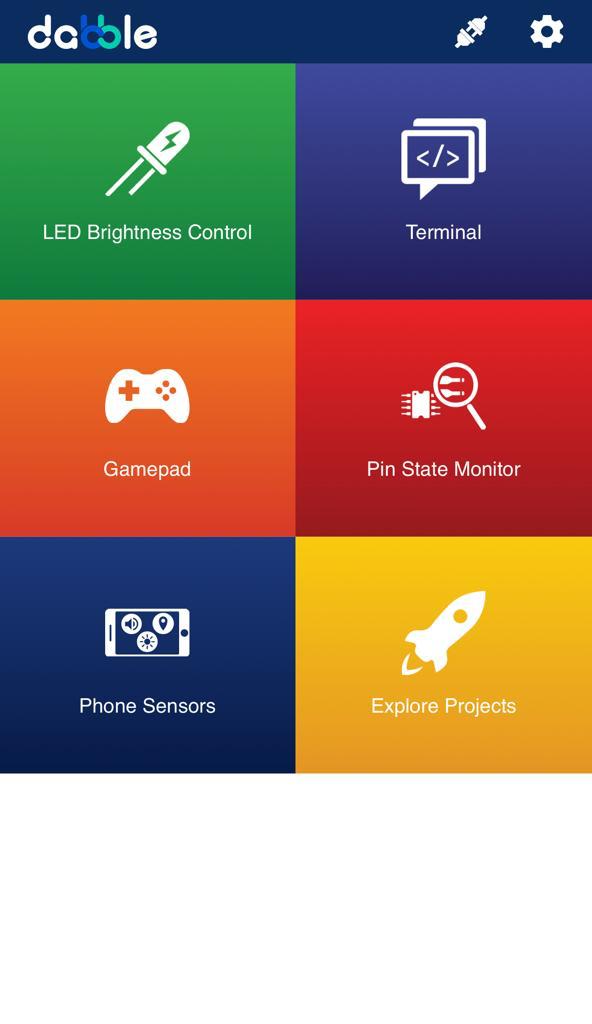

We will first understand how to operate Dabble and how to modify our code according to the requirements. The following image is the front page of the Dabble Application.

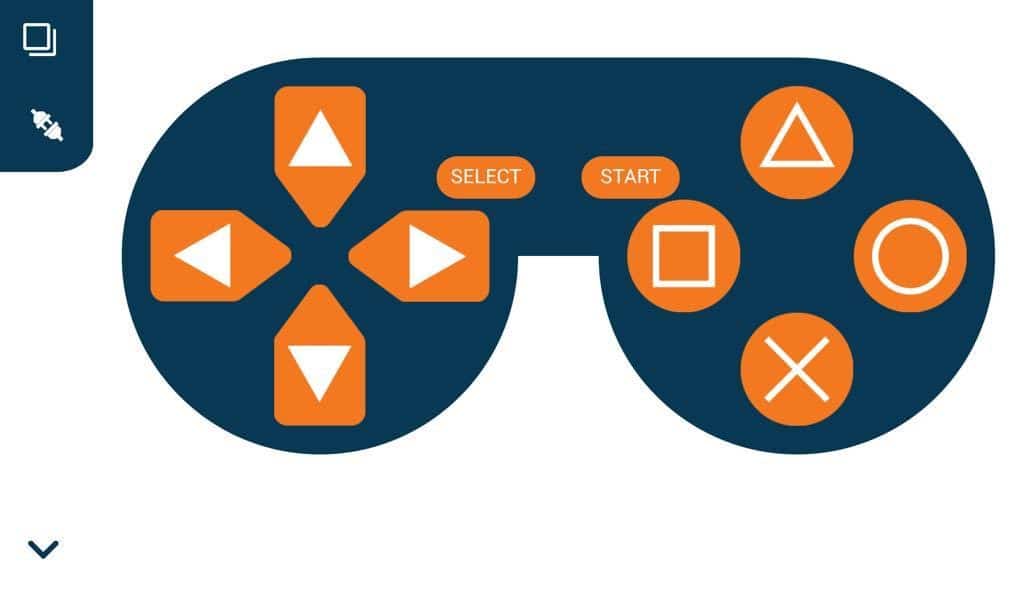

Select the Gamepad option from the Home Screen. We will use this gamepad to control our Mars Rover.

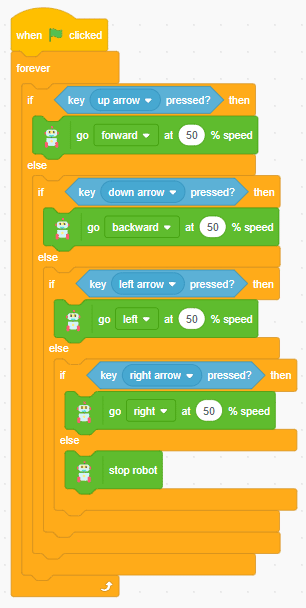

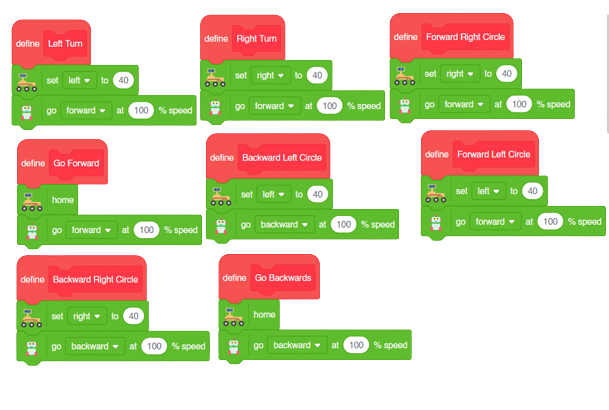

The following blocks represent the functions created to control the Mars Rover for different types of motion. We will use the arrow buttons to control the basic movements (Forward, Backward, Left, Right).

We will create custom functions for the specialized circular motions of the Mars Rover. We will use the Cross, Square, Circle, and Triangle buttons to control these circular motions.

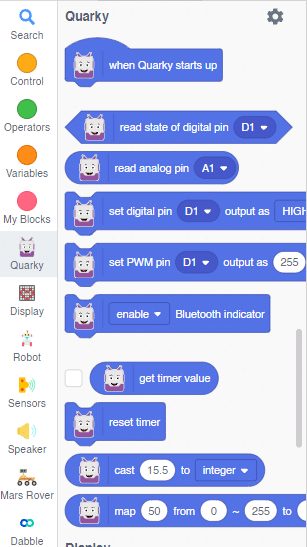

Note: You will have to add the Mars Rover and Dabble extensions to access the blocks.

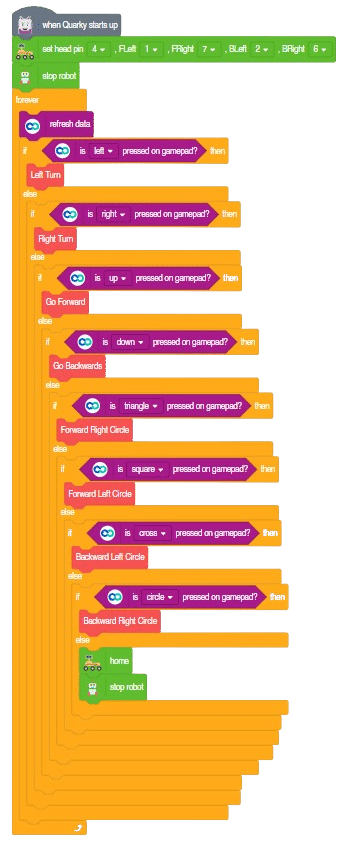

The main code is quite simple: it consists of nested if-else statements that determine the action to take when a specific button is pressed in the Dabble application.
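The button-to-action decision described above can be sketched in plain Python. This is only an illustration, not the block code from the activity: the button names, the action names, and the choice of which shape button triggers which circular motion are all assumptions made for the example.

```python
# Hypothetical button and action names, chosen only for illustration.
# The real names come from the Dabble and Mars Rover blocks in PictoBlox.
def action_for_button(pressed):
    """Return the rover action for the set of buttons currently pressed."""
    if "up" in pressed:
        return "forward"
    elif "down" in pressed:
        return "backward"
    elif "left" in pressed:
        return "left"
    elif "right" in pressed:
        return "right"
    elif "square" in pressed:
        # Assumption: Square triggers the circular-left function.
        return "circular_left"
    elif "circle" in pressed:
        # Assumption: Circle triggers the circular-right function.
        return "circular_right"
    else:
        # No recognised button is pressed, so the rover stays still.
        return "stop"


print(action_for_button({"up"}))   # forward
print(action_for_button(set()))    # stop
```

Because the checks run in order, the first matching button wins when several are pressed at once; in a real program this function would be called repeatedly inside the main loop.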

You will have to connect Quarky to the Dabble application on your device. Make sure Bluetooth is enabled on the device before connecting. After uploading the code, click the plug option in the Dabble application, as seen below, to find your Quarky device. Then select the respective Quarky to connect.

Forward-Backward Motions:

Right-Left Motions:

Circular Left Motion:

Circular Right Motion:

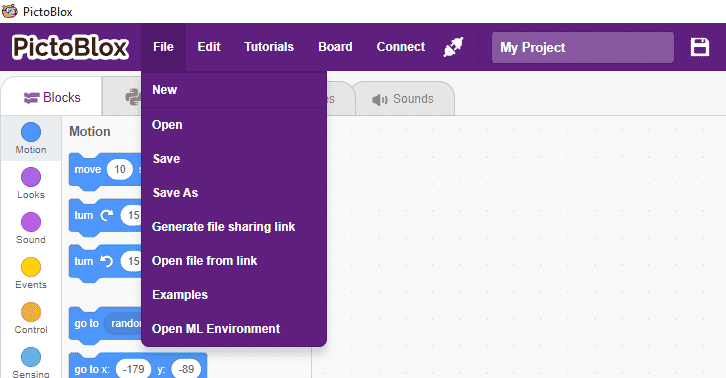

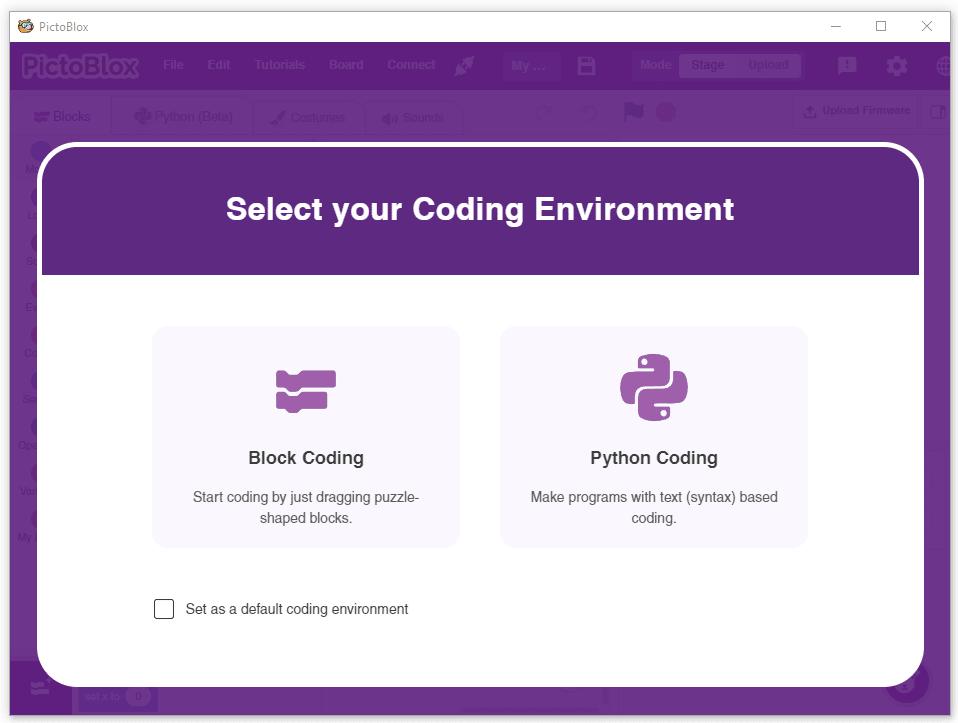

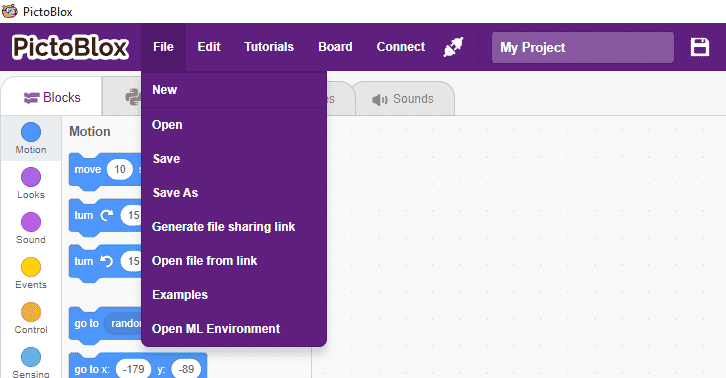

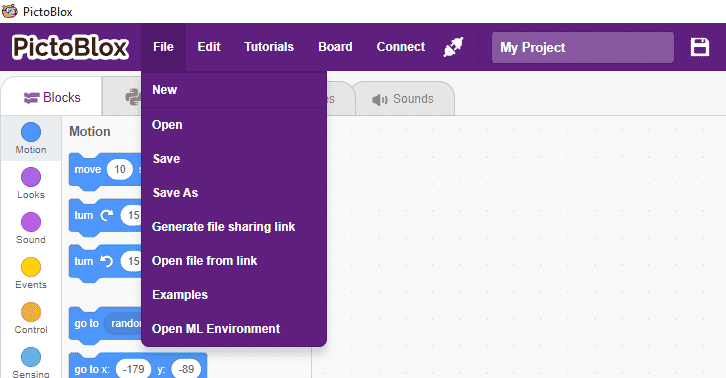

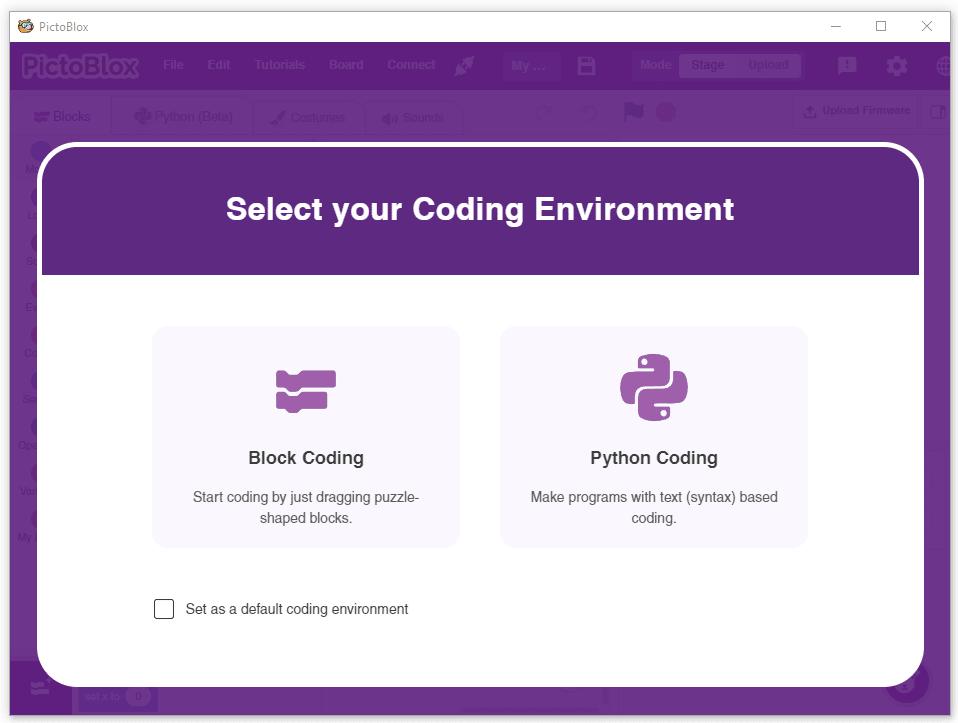

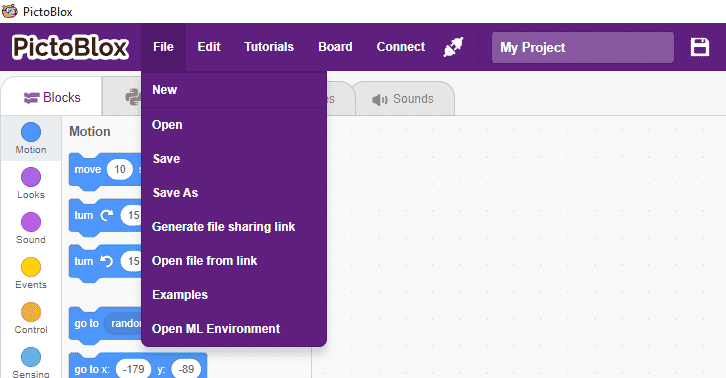

In this activity, we will use the Machine Learning Environment of the PictoBlox software. We will use its Audio Classifier and record our own custom sounds to control the Mars Rover.

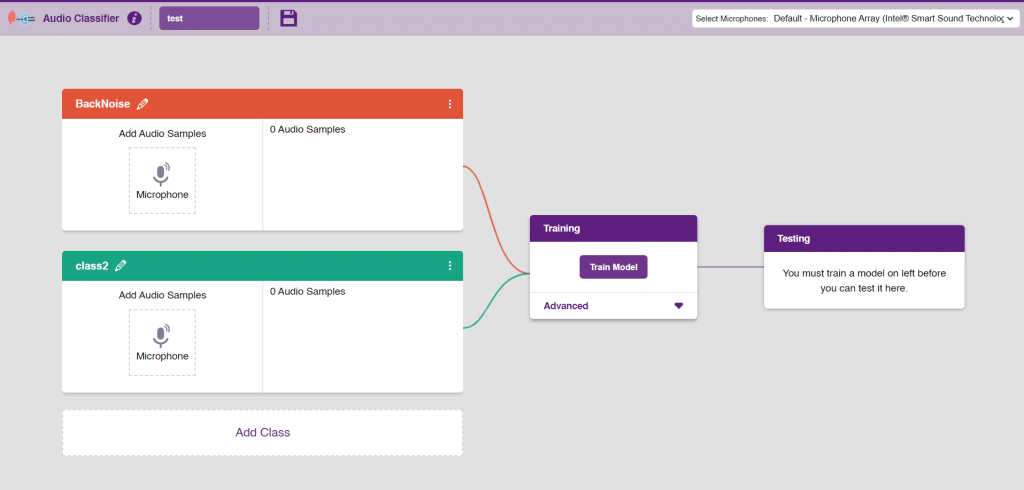

Follow the steps below to create your own Audio Classifier Model:

Note: You can add more classes to the projects using the Add Class button.

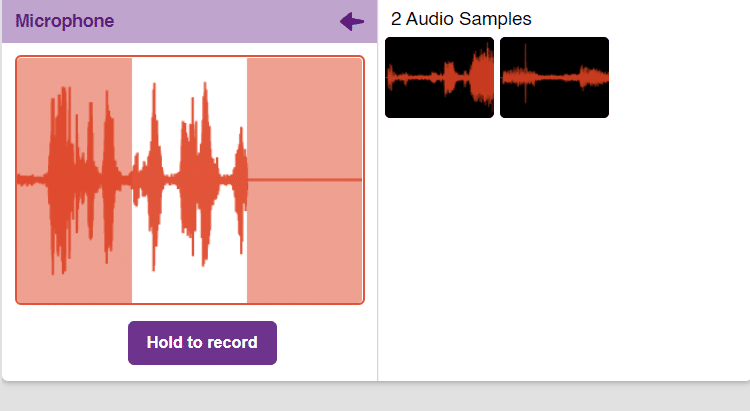

You can perform the following operations to manipulate the data in a class.

Note: You can only change the class name at the start, before adding any audio samples. Once audio samples have been added to a class, its name can no longer be changed.

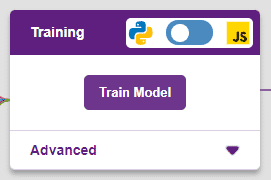

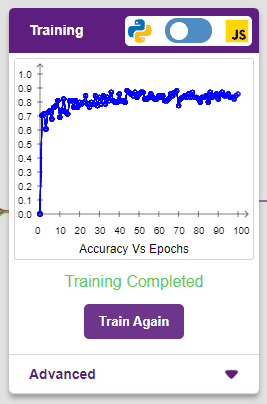

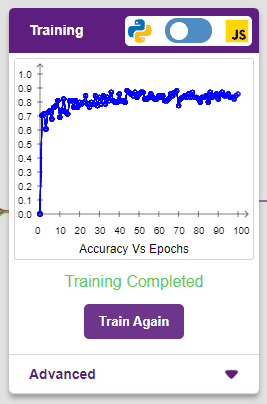

Once the data is added, it is ready to be used for model training. By training the model, we extract meaningful information from the audio samples, and that in turn updates the weights. Once these weights are saved, we can use our model to make predictions on previously unseen data.

The accuracy of the model should increase over time. The x-axis of the graph shows the epochs, and the y-axis represents the accuracy at the corresponding epoch. Remember, the higher the reading in the accuracy graph, the better the model. The range of the accuracy is 0 to 1.
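To make the accuracy value concrete, here is a minimal Python sketch of how one point on such a graph could be computed: accuracy is the fraction of samples whose predicted class matches the true class. The class names and the per-epoch predictions are invented for the example and are not output from PictoBlox.

```python
def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels (between 0 and 1)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("need at least one sample")
    correct = sum(1 for p, a in zip(predicted, actual) if p == a)
    return correct / len(actual)


# Hypothetical true labels for four samples.
actual = ["class_a", "class_b", "class_a", "class_c"]

# Hypothetical predictions made after each of three epochs.
epoch_predictions = [
    ["class_a", "class_a", "class_a", "class_a"],
    ["class_a", "class_b", "class_a", "class_a"],
    ["class_a", "class_b", "class_a", "class_c"],
]

history = [accuracy(p, actual) for p in epoch_predictions]
print(history)  # [0.5, 0.75, 1.0]
```

Plotting `history` against the epoch number gives the kind of rising curve described above.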

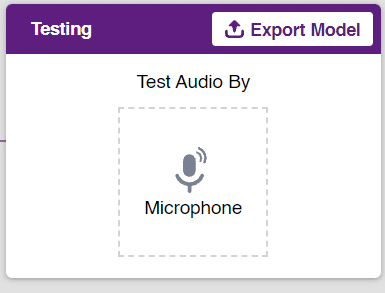

To test the model, simply use the microphone directly and check the classes as shown in the image below:

You will be able to test the difference in audio samples recorded from the microphone as shown below:

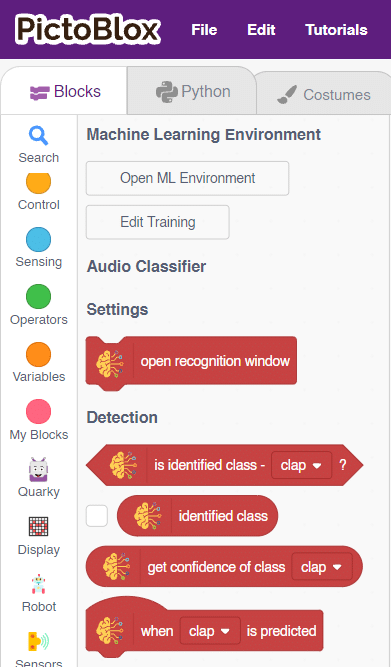

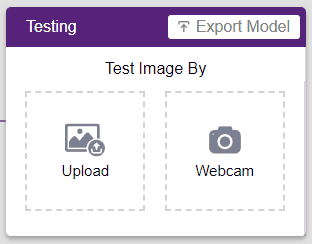

Click on the “Export Model” button on the top right of the Testing box, and PictoBlox will load your model into the Block Coding Environment if you opened the ML Environment from Block Coding.

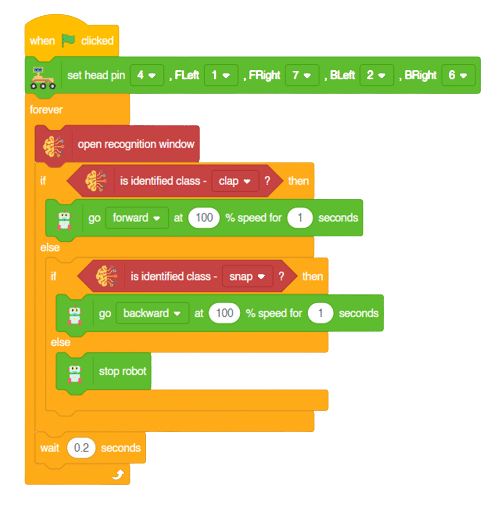

The Mars Rover will move according to the following logic:

Note: You can add more classes with clearly distinguishable sounds to customize your control. This is just a small example from which you can build your own sound-controlled Mars Rover, step by step.
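One way to keep such a setup easy to extend is a lookup table from class label to rover action. The sketch below is plain Python with placeholder labels and actions: `sound_a`, `sound_b`, `sound_c`, and the actions assigned to them are hypothetical and are not the classes or logic used in this activity.

```python
# Hypothetical class labels and actions; replace them with the classes
# you trained in your own Audio Classifier model.
SOUND_TO_ACTION = {
    "sound_a": "forward",
    "sound_b": "backward",
    "sound_c": "left",
}


def action_for_sound(label, table=SOUND_TO_ACTION):
    """Look up the rover action for a predicted class; unknown labels stop the rover."""
    return table.get(label, "stop")


# Adding a class only requires one more entry in the table.
extended = {**SOUND_TO_ACTION, "sound_d": "right"}

print(action_for_sound("sound_a"))            # forward
print(action_for_sound("sound_d"))            # stop
print(action_for_sound("sound_d", extended))  # right
```

Falling back to "stop" for any unrecognised label is a design choice made here so that background noise does not move the rover.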

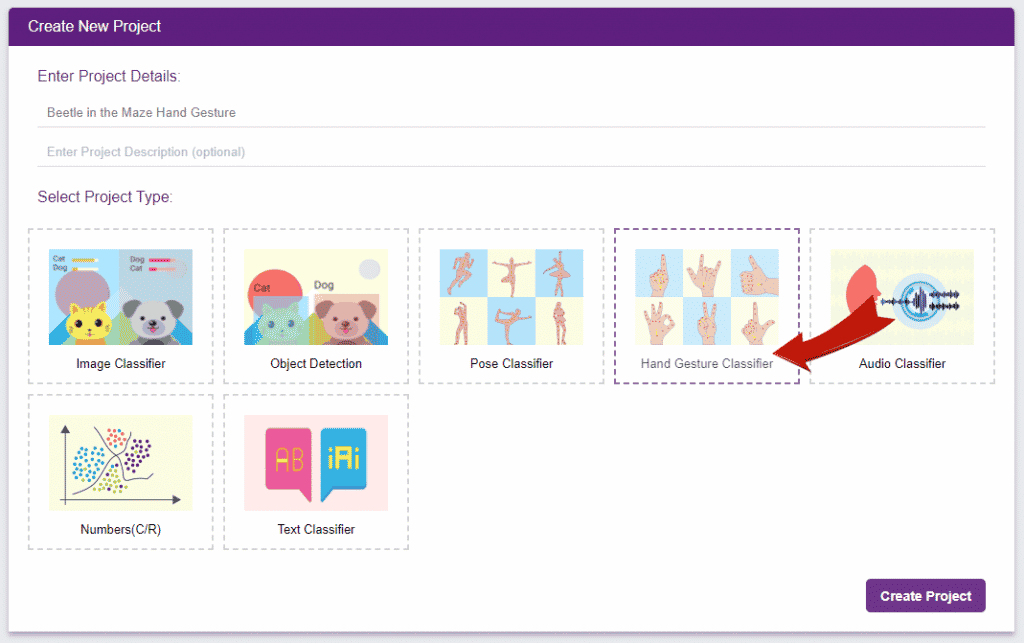

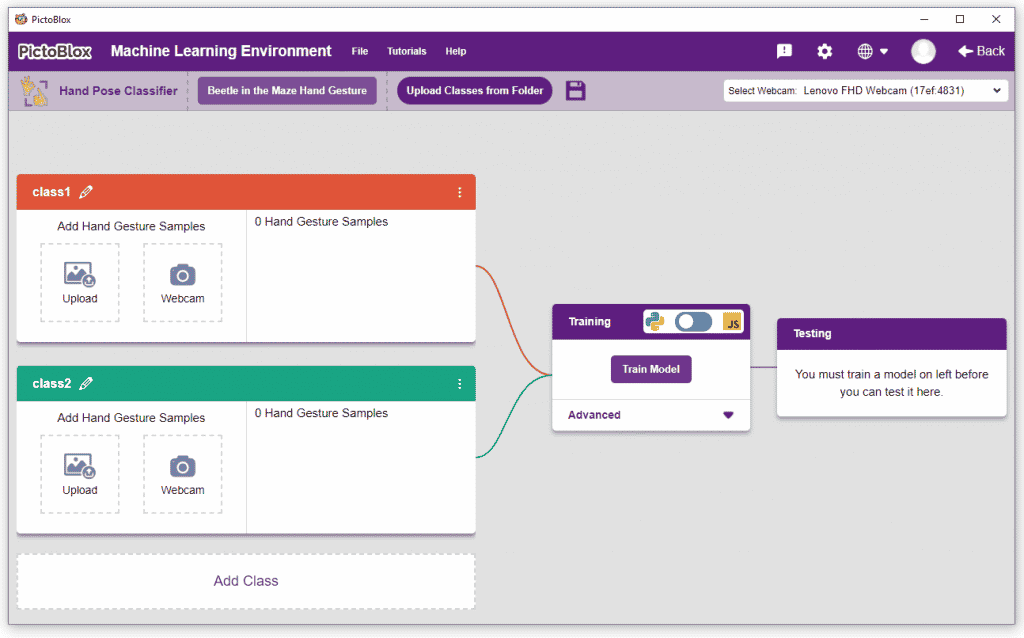

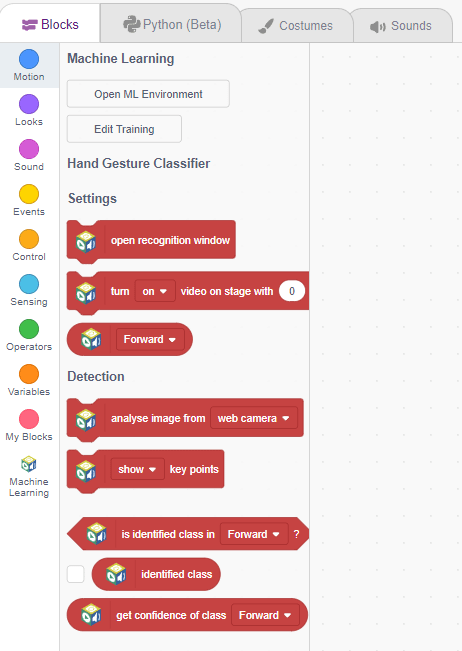

This project demonstrates how to use the Machine Learning Environment to make a machine-learning model that identifies hand gestures and makes the Mars Rover move accordingly.

We are going to use the Hand Classifier of the Machine Learning Environment. The model works by analyzing your hand position with the help of 21 data points.
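As a rough illustration of what "21 data points" means for a classifier, the Python sketch below flattens 21 points into a single list of numbers that a model could take as input. It assumes each point is an (x, y) pair; the text does not state the actual format PictoBlox uses internally, so treat this as a sketch only.

```python
NUM_POINTS = 21  # number of hand data points mentioned in the text


def flatten_landmarks(points):
    """Turn 21 (x, y) points into one flat feature list of 42 numbers.

    Assumption: each point is an (x, y) pair. The internal format used
    by the Hand Classifier is not described in this tutorial.
    """
    if len(points) != NUM_POINTS:
        raise ValueError(f"expected {NUM_POINTS} points, got {len(points)}")
    features = []
    for x, y in points:
        features.extend([x, y])
    return features


# Made-up coordinates, only to show the shape of the data.
example_points = [(i, i * 2) for i in range(NUM_POINTS)]
features = flatten_landmarks(example_points)
print(len(features))  # 42
```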

Follow the steps below:

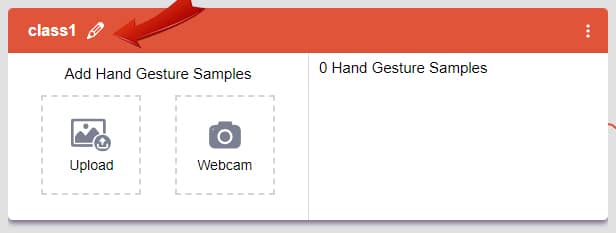

There are 2 things that you have to provide in a class:

You can perform the following operations to manipulate the data in a class.

Once the data is added, it is ready to be used for model training. By training the model, we extract meaningful information from the hand pose, and that in turn updates the weights. Once these weights are saved, we can use our model to make predictions on previously unseen data.

The accuracy of the model should increase over time. The x-axis of the graph shows the epochs, and the y-axis represents the accuracy at the corresponding epoch. Remember, the higher the reading in the accuracy graph, the better the model. The range of the accuracy is 0 to 1.

To test the model, simply enter the input values in the “Testing” panel and click on the “Predict” button.

The model will return the probability of the input belonging to the classes.
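A program that uses these probabilities usually picks the most likely class and ignores predictions that are too uncertain. The following Python sketch shows that idea; the class names, the example probabilities, and the 0.6 threshold are assumptions for illustration, not values from PictoBlox.

```python
def predict_class(probabilities, threshold=0.6):
    """Return the most probable class, or None if the model is not confident.

    `probabilities` maps each class name to a probability.
    The threshold of 0.6 is an arbitrary value chosen for this sketch.
    """
    if not probabilities:
        return None
    best = max(probabilities, key=probabilities.get)
    if probabilities[best] < threshold:
        return None
    return best


# Hypothetical model outputs for two different hand poses.
confident = {"class_a": 0.1, "class_b": 0.85, "class_c": 0.05}
uncertain = {"class_a": 0.4, "class_b": 0.35, "class_c": 0.25}

print(predict_class(confident))  # class_b
print(predict_class(uncertain))  # None
```

Returning `None` for low-confidence predictions lets the calling code keep the rover stopped instead of acting on a guess.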

Click on the “Export Model” button on the top right of the Testing box, and PictoBlox will load your model into the Block Coding Environment if you opened the ML Environment from Block Coding.

The Mars Rover will move according to the following logic: