Introduction

A Sound-Based Quadruped with Machine Learning refers to a Quadruped robot that can perceive and interact with its environment through sound-based sensing and uses machine-learning techniques to process and analyze the auditory data it receives.

Combining quadruped robots with machine learning can make interaction between people and machines more natural and intuitive. It also opens up potential applications in fields such as healthcare, education, and entertainment.

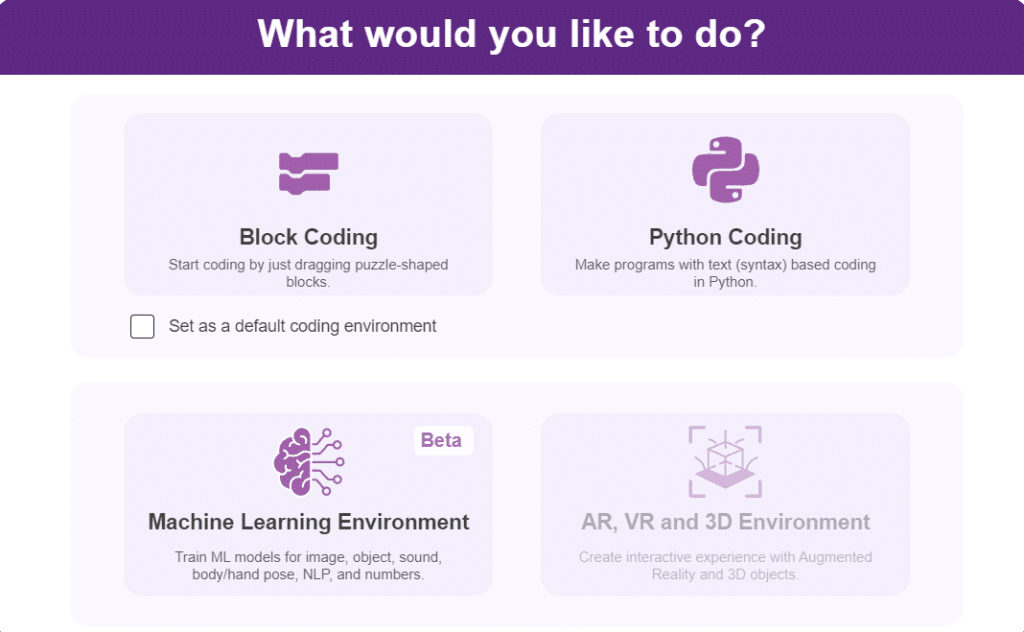

In this activity, we will use the Machine Learning Environment of the PictoBlox software. We will use its Audio Classifier to train a model on our own custom sounds and then use that model to control the Quadruped.

Audio Classifier Workflow

Follow the steps below to create your own Audio Classifier Model:

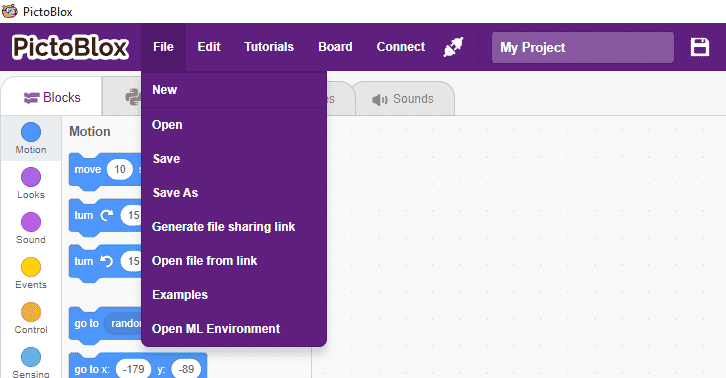

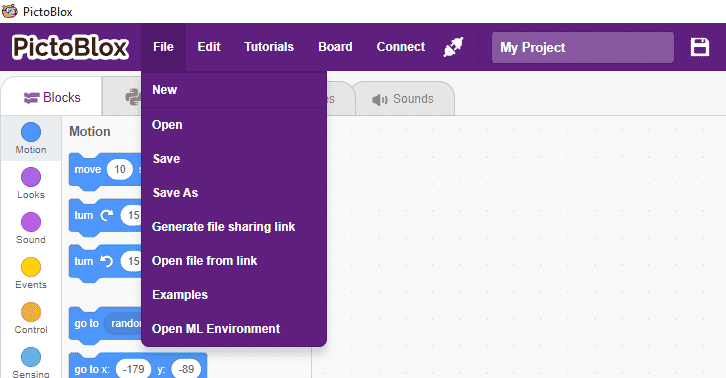

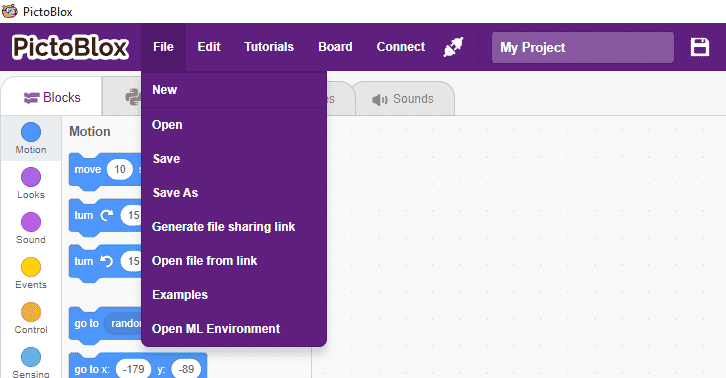

- Open PictoBlox and create a new file.

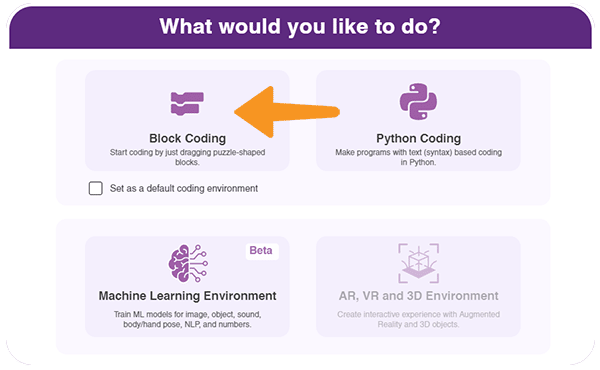

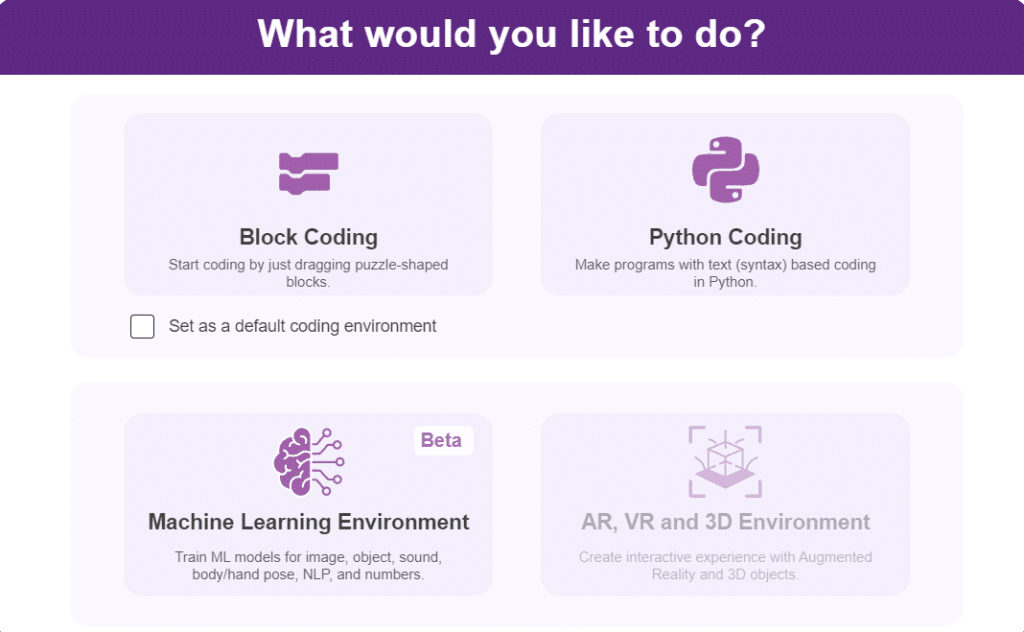

- Select Block Coding as the coding environment.

- Select the “Open ML Environment” option under the “Files” tab to access the ML Environment.

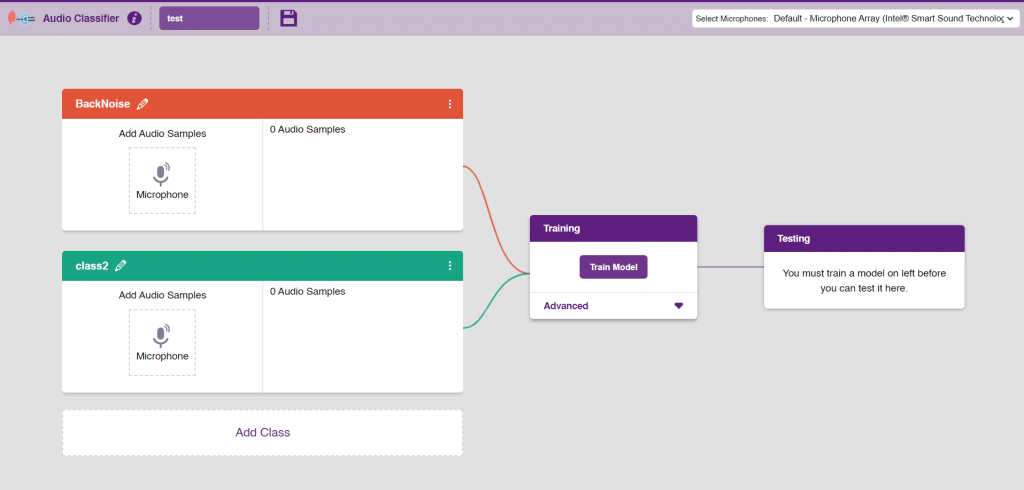

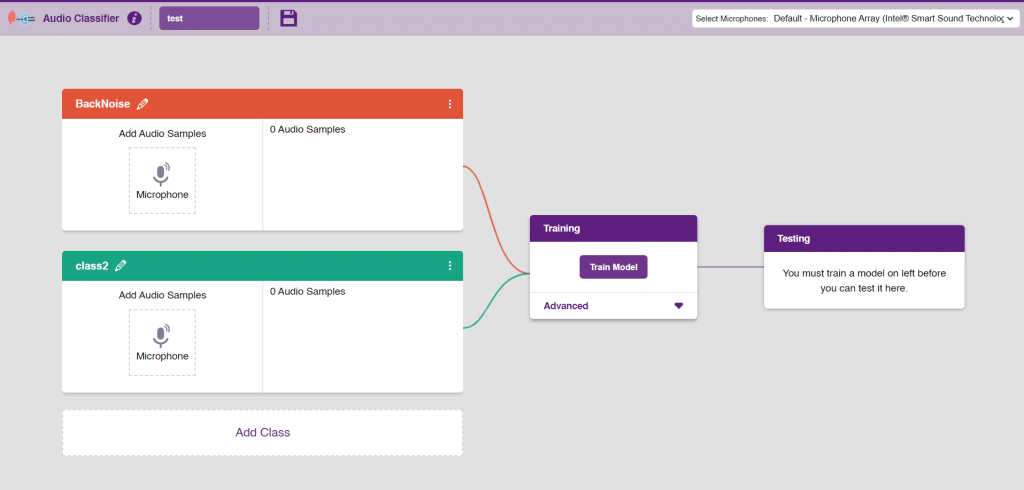

- A new window will open. Enter a project name of your choice and select the “Audio Classifier” extension. Click the “Create Project” button to open the Audio Classifier window.

- You will see the classifier workflow with two classes already created for you. Your environment is now set up, and you can start adding data.

- As shown in the image above, we will use two audio classes and record their samples with the microphone. Rename Class 1 as “Clap” and Class 2 as “Snap”.

Note: You can add more classes to the project using the Add Class button.

Adding Data to a Class

You can perform the following operations on a class:

- Naming the Class: You can rename the class by clicking on the edit button.

- Adding Data to the Class: You can add the data using the Microphone.

- Add audio samples to each class. Record at least 20 samples per class so that the model can achieve good accuracy.

- For the first class, “Clap”, record clap sounds through the microphone.

- For the second class, “Snap”, record finger-snap sounds through the microphone.

Note: You can change a class name only before adding any audio samples to it. Once a class contains samples, its name can no longer be changed.

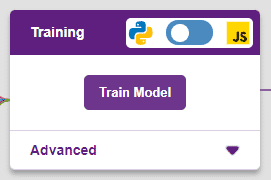
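The "at least 20 samples per class" guideline is easy to check mechanically. The sketch below is purely illustrative: PictoBlox tracks the recordings itself, so the dictionary of class names to clip IDs and the function `classes_needing_samples` are hypothetical stand-ins, not part of PictoBlox.

```python
# Minimum recommended number of recordings per class (from the guideline above).
MIN_SAMPLES_PER_CLASS = 20


def classes_needing_samples(dataset: dict, minimum: int = MIN_SAMPLES_PER_CLASS) -> dict:
    """Return {class name: samples still missing} for every class below the minimum.

    `dataset` is a hypothetical in-memory view: class name -> list of recorded clip IDs.
    """
    return {
        name: minimum - len(clips)
        for name, clips in dataset.items()
        if len(clips) < minimum
    }


if __name__ == "__main__":
    recorded = {
        "Clap": [f"clap_{i}" for i in range(22)],
        "Snap": [f"snap_{i}" for i in range(15)],
    }
    print(classes_needing_samples(recorded))  # {'Snap': 5}
```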

Training the Model

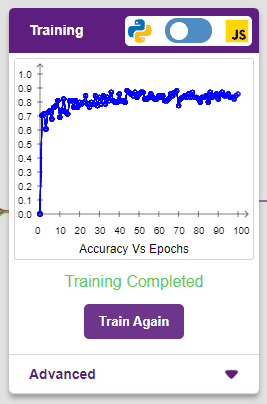

After the data is added, it is ready to be used for training. Training the model extracts meaningful information from the audio samples, and that information is used to update the model's weights. Once these weights are saved, we can use the model to make predictions on previously unseen data.
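To make "training updates the weights" concrete, here is a minimal sketch that uses a swapped-in technique: logistic regression trained with batch gradient descent on made-up two-number feature vectors. This is not how PictoBlox's Audio Classifier works internally. The feature values, the `train`/`predict` helpers, and the learning rate are all assumptions chosen for illustration. The pattern is the same, though: repeated epochs adjust the weights, and the saved weights are then reused to classify new inputs.

```python
import math

# Made-up feature vectors (imagine "relative loudness", "relative pitch");
# a real classifier would compute its own features from the recordings.
CLAP_FEATURES = [[0.9, 0.2], [0.8, 0.3], [0.95, 0.1]]
SNAP_FEATURES = [[0.3, 0.8], [0.2, 0.9], [0.25, 0.7]]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def score(w, b, x):
    """Probability that x is a "Clap" under the current weights."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train(samples, labels, epochs=200, lr=0.5):
    """Batch gradient descent on log-loss: every epoch nudges w and b."""
    w = [0.0] * len(samples[0])
    b = 0.0
    n = len(samples)
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, y in zip(samples, labels):
            err = score(w, b, x) - y  # positive if "Clap" was over-predicted
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    return "Clap" if score(w, b, x) >= 0.5 else "Snap"


samples = CLAP_FEATURES + SNAP_FEATURES
labels = [1] * len(CLAP_FEATURES) + [0] * len(SNAP_FEATURES)
w, b = train(samples, labels)  # these are the "saved" weights
print(predict(w, b, [0.85, 0.25]), predict(w, b, [0.2, 0.85]))  # Clap Snap
```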

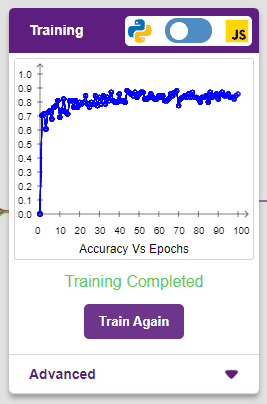

The accuracy of the model should increase over time. The x-axis of the graph shows the epochs, and the y-axis shows the accuracy at each epoch. Accuracy ranges from 0 to 1, and the higher the value, the better the model.

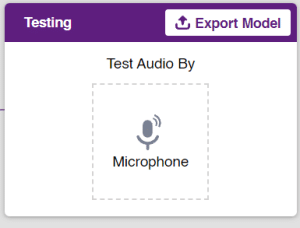

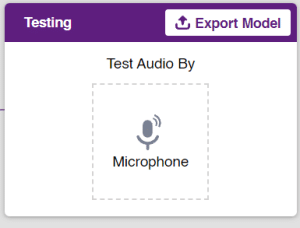
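The accuracy plotted on the graph is the fraction of samples the model classifies correctly, which is why it always lies between 0 and 1. A minimal sketch of that calculation follows. The `accuracy` helper is hypothetical, and the labels in the example are illustrative values, not real results.

```python
def accuracy(predictions: list, targets: list) -> float:
    """Fraction of predictions that match the true labels; always in [0, 1]."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("need two non-empty lists of equal length")
    correct = sum(p == t for p, t in zip(predictions, targets))
    return correct / len(targets)


# Illustrative labels only: 3 of the 4 predictions match.
predicted = ["Clap", "Snap", "Clap", "Clap"]
actual = ["Clap", "Snap", "Snap", "Clap"]
print(accuracy(predicted, actual))  # 0.75
```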

Testing the Model

To test the model, make sounds into the microphone and check which class the model predicts for each one, as shown in the images below:

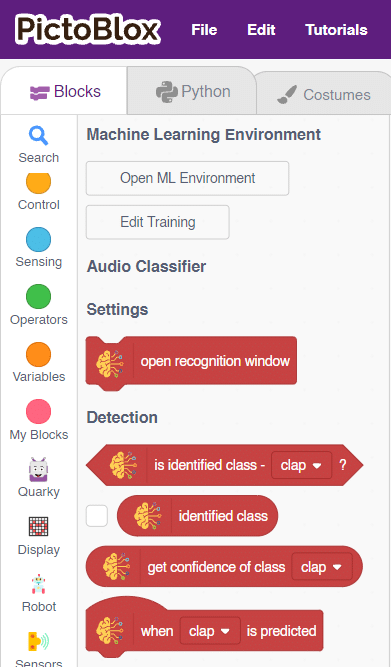

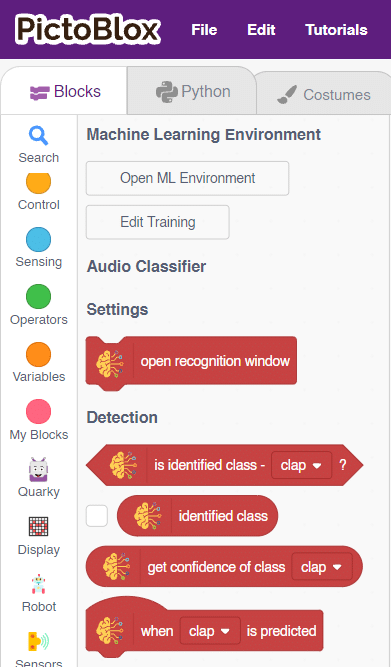
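When testing, the model reports a confidence for each class, and the prediction is the class with the highest confidence. The sketch below shows that selection step. The `top_prediction` function and its optional `threshold` (for ignoring uncertain guesses such as background noise) are assumptions for illustration; the source does not say that PictoBlox applies a threshold.

```python
from typing import Optional


def top_prediction(confidences: dict, threshold: float = 0.5) -> Optional[str]:
    """Return the most confident class, or None if its confidence is below `threshold`.

    `confidences` maps class names to scores between 0 and 1 (hypothetical format).
    """
    label, best = max(confidences.items(), key=lambda item: item[1])
    return label if best >= threshold else None


print(top_prediction({"Clap": 0.91, "Snap": 0.09}))                 # Clap
print(top_prediction({"Clap": 0.48, "Snap": 0.52}, threshold=0.7))  # None
```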

Export in Block Coding

Click the “Export Model” button at the top right of the Testing box. If you opened the ML Environment from Block Coding, PictoBlox will load your model into the Block Coding environment.

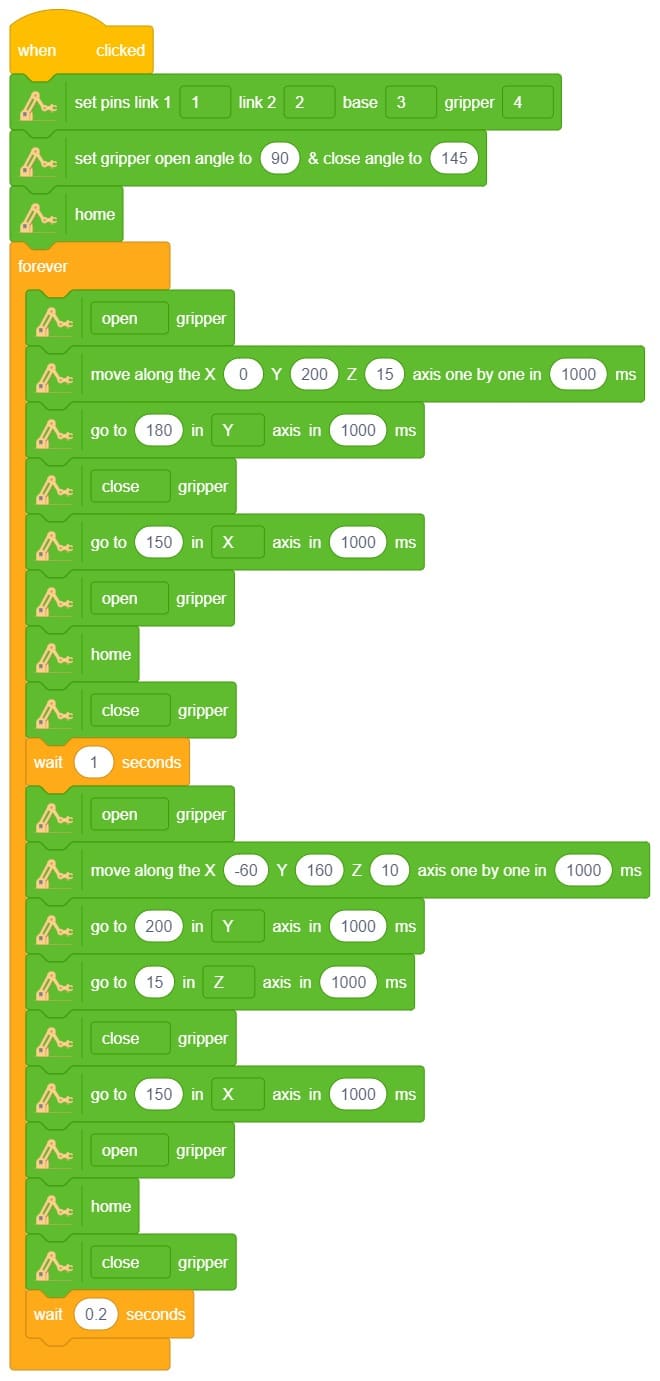

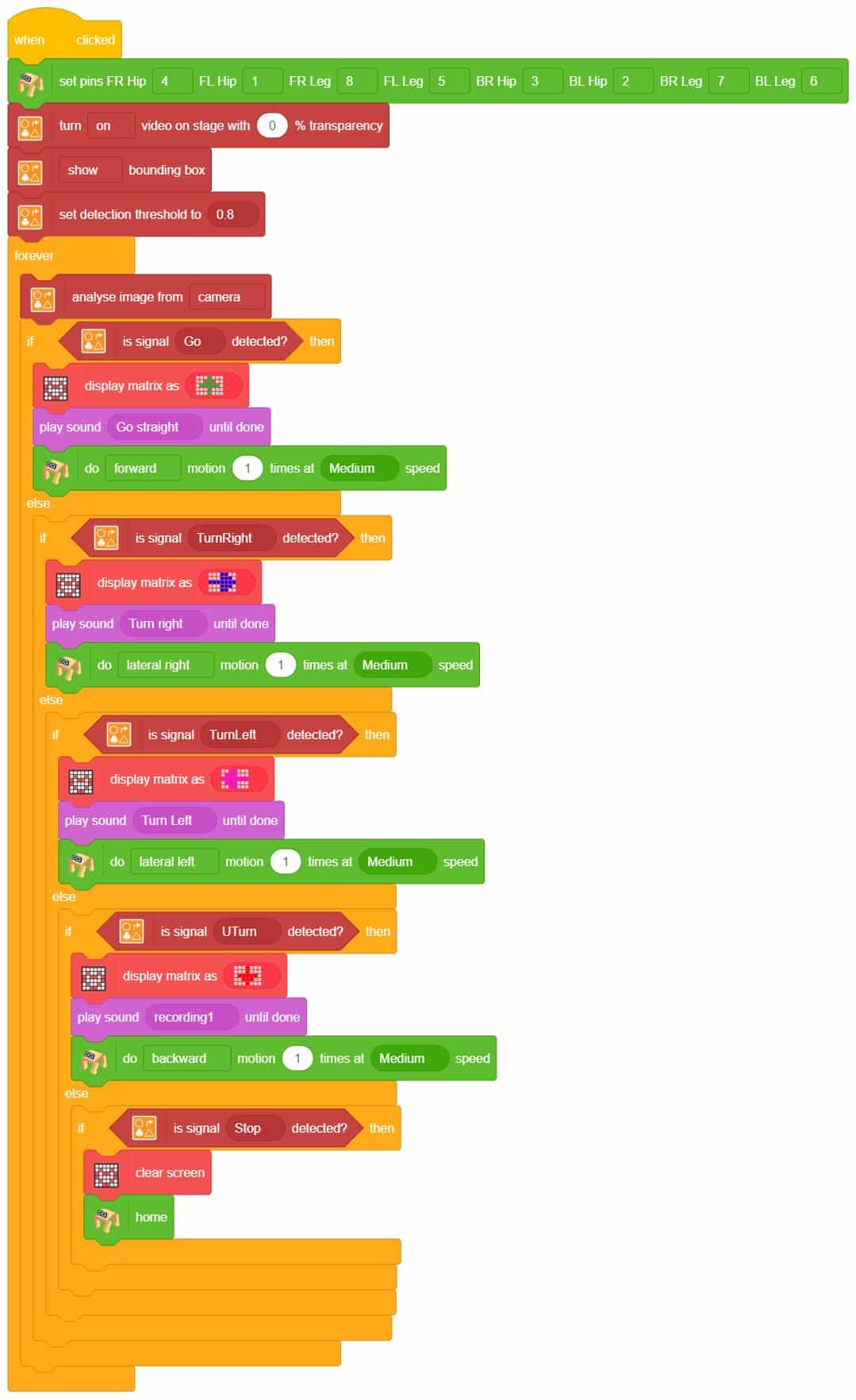

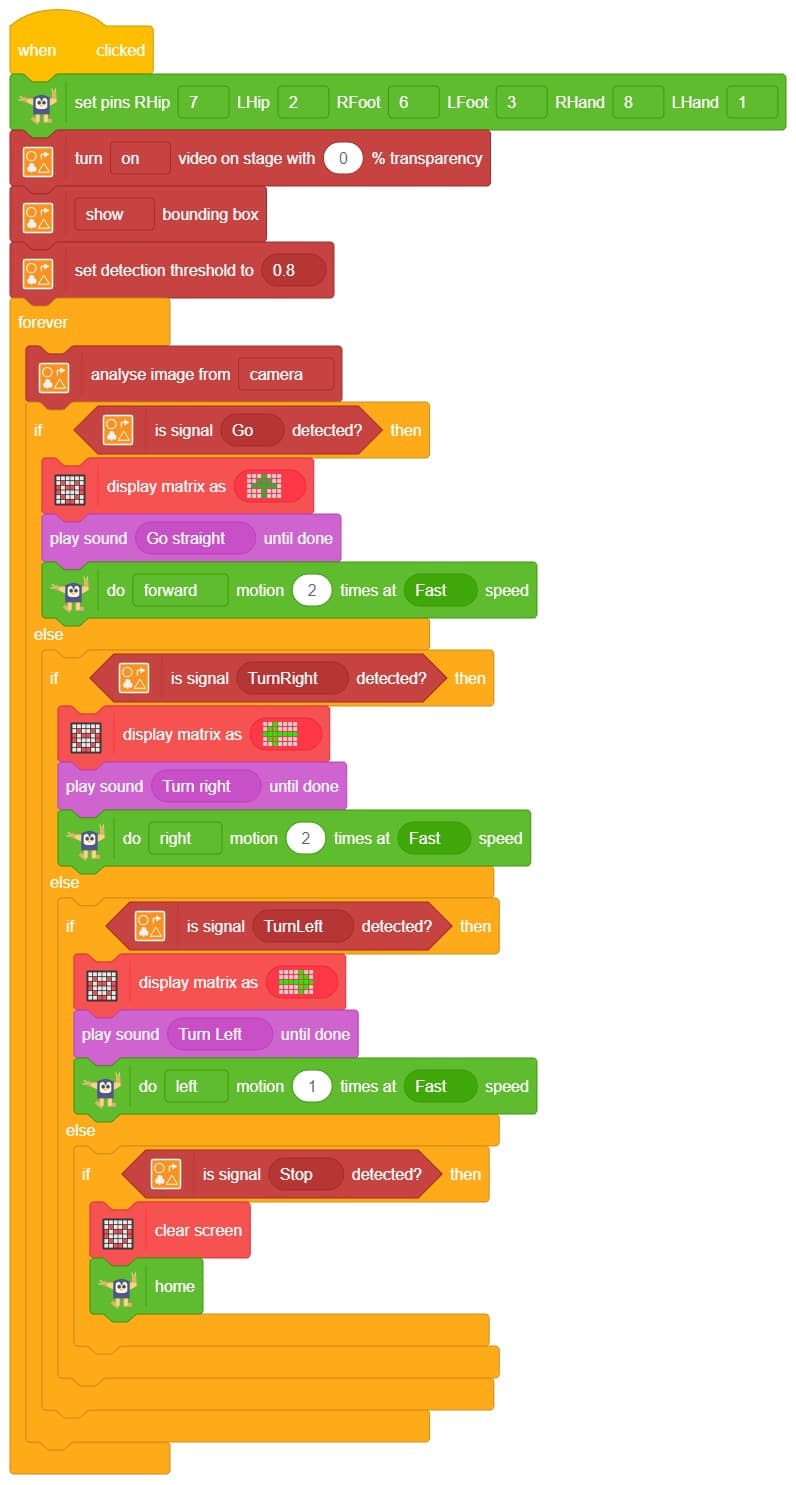

The Quadruped will move according to the following logic:

- When a “clap” sound is detected, the Quadruped moves forward.

- When a “snap” sound is detected, the Quadruped moves backward.

Note: You can add more classes with other distinctive sounds to customize the control further. This small example is a starting point that you can build on, step by step, to create your own sound-controlled Quadruped.
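The sound-to-movement logic is essentially a lookup table, which also makes adding new classes straightforward: each new sound is one more entry. In the minimal sketch below, the action strings and the `action_for` helper are hypothetical and only stand in for the Quadruped movement blocks. The fallback to the home position follows the "Otherwise" case in the Logic section. Labels are compared case-insensitively because the class names appear as both “Clap” and “clap” in this guide.

```python
from typing import Optional

# Hypothetical action names standing in for the Quadruped movement blocks.
# To support a new sound class, add one more entry here.
SOUND_TO_ACTION = {"clap": "move forward", "snap": "move backward"}
DEFAULT_ACTION = "return home"


def action_for(label: Optional[str]) -> str:
    """Map a predicted class name to a movement; unknown or missing labels go home."""
    if label is None:
        return DEFAULT_ACTION
    return SOUND_TO_ACTION.get(label.lower(), DEFAULT_ACTION)


print(action_for("Clap"))     # move forward
print(action_for("snap"))     # move backward
print(action_for("whistle"))  # return home
```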

Code

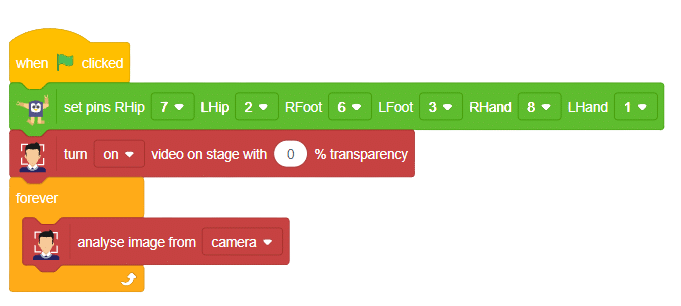

Logic

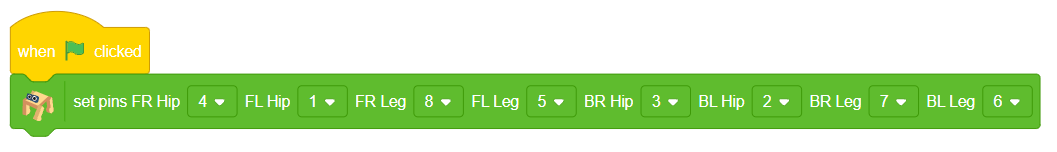

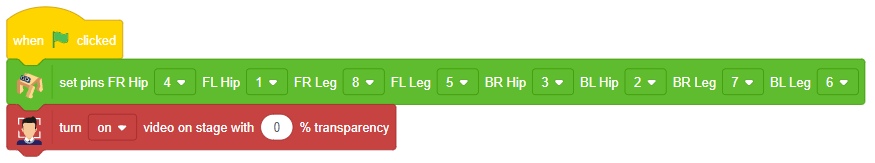

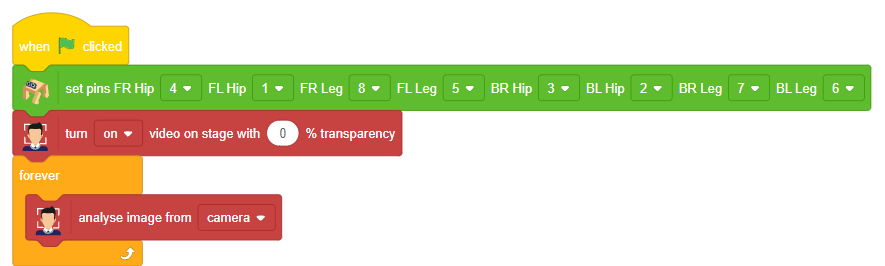

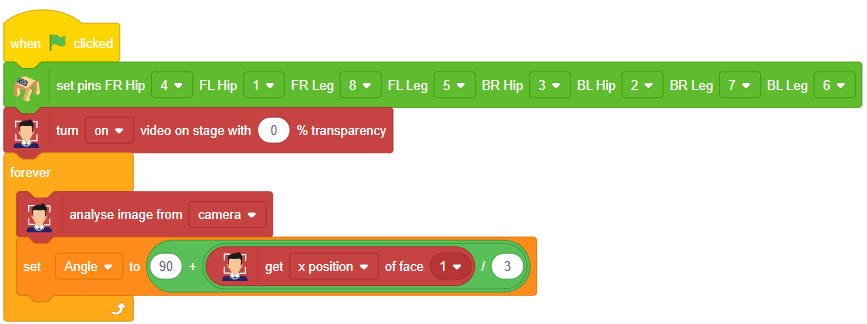

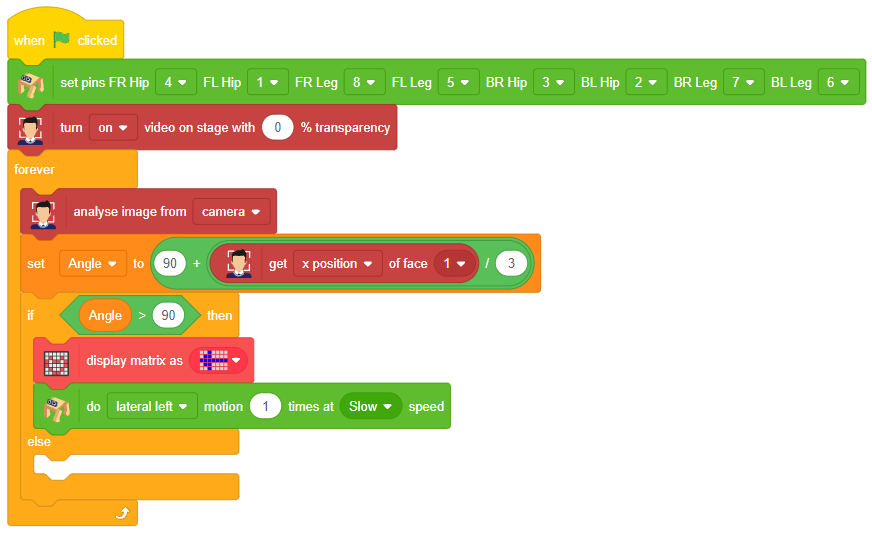

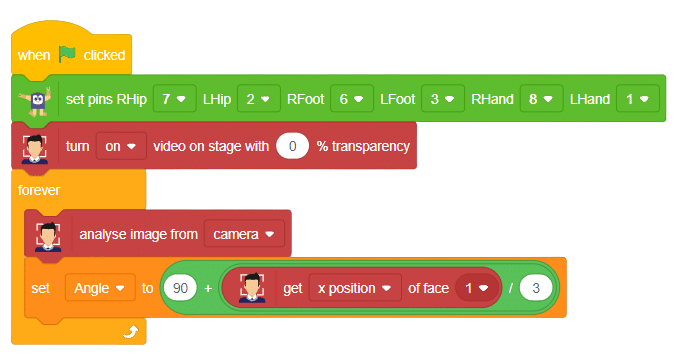

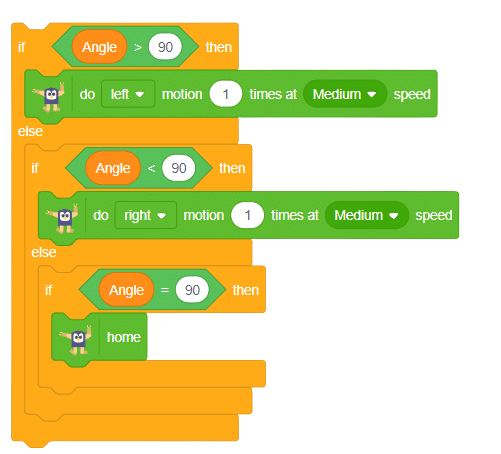

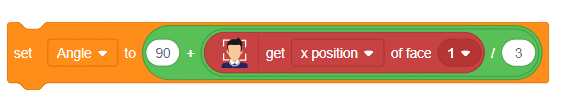

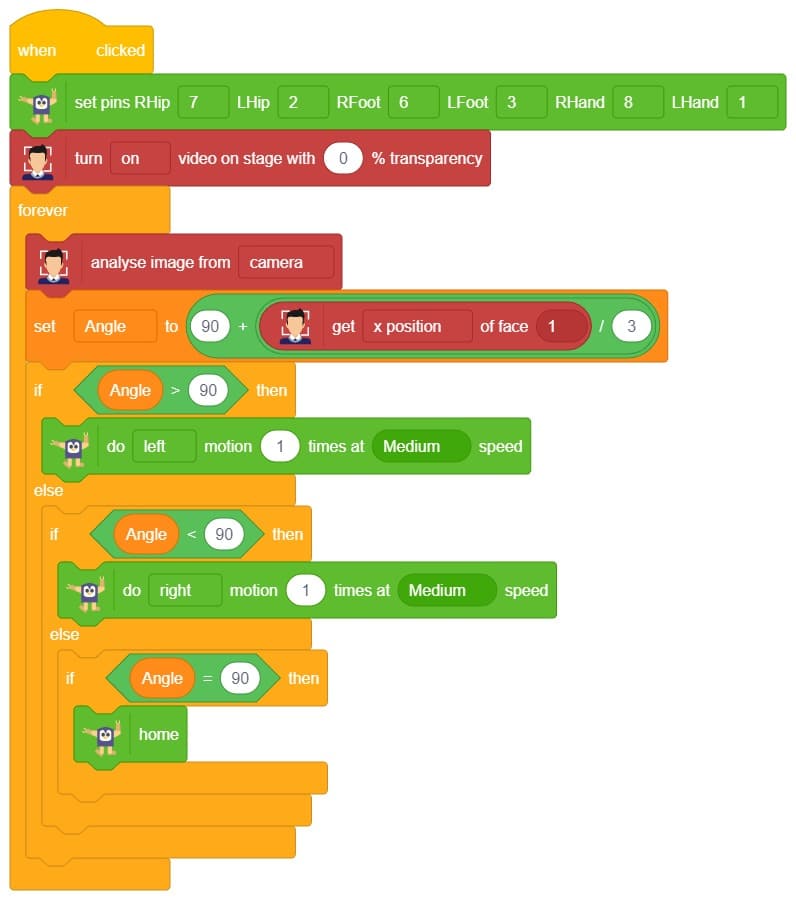

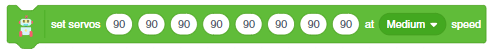

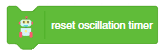

- First, initialize the Quadruped extension.

- Then, start a forever loop that continuously analyzes the audio from the microphone.

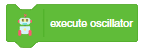

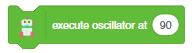

- If the program detects a clap sound, the Quadruped will move forward at a specific speed.

- Similarly, if it identifies a snap sound, the Quadruped will move backward at a specific speed.

- Otherwise, the Quadruped will remain in its initial position (home position).
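The steps above can be sketched in Python to show the control flow of the block program. This is not PictoBlox's Python API. `FakeQuadruped` is a hypothetical stand-in that only records commands, the default speed of 50 is an arbitrary assumption, and the forever loop is replaced by a loop over a finite list of detected labels so that the example terminates.

```python
from typing import Iterable, Optional


class FakeQuadruped:
    """Hypothetical stand-in for the Quadruped extension; it only logs commands."""

    def __init__(self) -> None:
        self.log = []

    def move_forward(self, speed: int) -> None:
        self.log.append(f"forward@{speed}")

    def move_backward(self, speed: int) -> None:
        self.log.append(f"backward@{speed}")

    def home(self) -> None:
        self.log.append("home")


def control_loop(robot: FakeQuadruped, detections: Iterable[Optional[str]], speed: int = 50) -> None:
    """Handle one detected label per pass; on the robot this runs forever."""
    for label in detections:
        if label == "Clap":
            robot.move_forward(speed)
        elif label == "Snap":
            robot.move_backward(speed)
        else:
            robot.home()  # no recognized sound: go back to the home position


robot = FakeQuadruped()  # step 1: "initialize the Quadruped extension"
control_loop(robot, ["Clap", None, "Snap"])
print(robot.log)  # ['forward@50', 'home', 'backward@50']
```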

Output