
Introduction to Image Classifier

The Image Classifier of the PictoBlox Machine Learning Environment is used for classifying images into different classes based on their characteristics.

For example, let’s say you want to build a model that judges whether a person is wearing a mask correctly, wearing it incorrectly, or not wearing one at all. You’ll have to classify each image into one of three classes:

- Wearing mask

- Not wearing mask

- Wearing mask incorrectly

This is an image classification problem: you want the machine to assign each image to one of the classes.
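Internally, a classifier typically gives each class a score and reports the class with the highest score. The plain-Python sketch below illustrates only that final step. The scores are made-up numbers, and `predict_label` is a hypothetical helper, not a PictoBlox block or function.

```python
# Hypothetical example: the three class names used in this tutorial.
CLASSES = ["Mask On", "Mask Off", "Mask Wrong"]


def predict_label(scores):
    """Return the class whose score is highest.

    `scores` is an assumed list of per-class scores, in the same order
    as CLASSES (for example, the output of a trained model).
    """
    if len(scores) != len(CLASSES):
        raise ValueError("expected one score per class")
    # Index of the largest score.
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return CLASSES[best_index]


print(predict_label([0.10, 0.75, 0.15]))  # Mask Off
```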

In this tutorial, we’ll learn how to construct the ML model using the PictoBlox Image Classifier.

These are the steps involved in the procedure:

- Setting up the environment

- Gathering the data (Data collection)

- Training the model

- Testing the model

- Exporting the model to PictoBlox

- Creating a script in PictoBlox

Setting up the Environment

First, we need to set up the ML environment for image classification.

Follow the steps below:

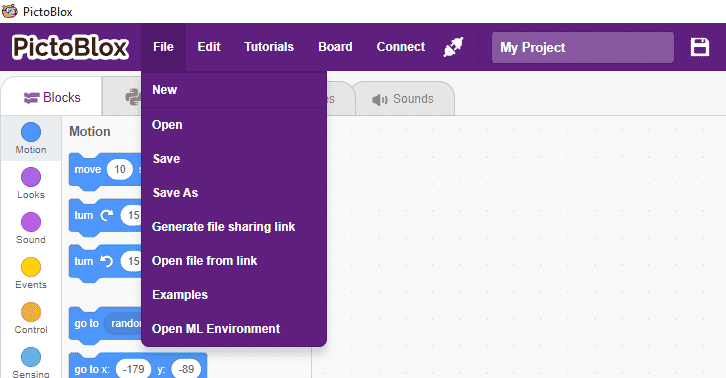

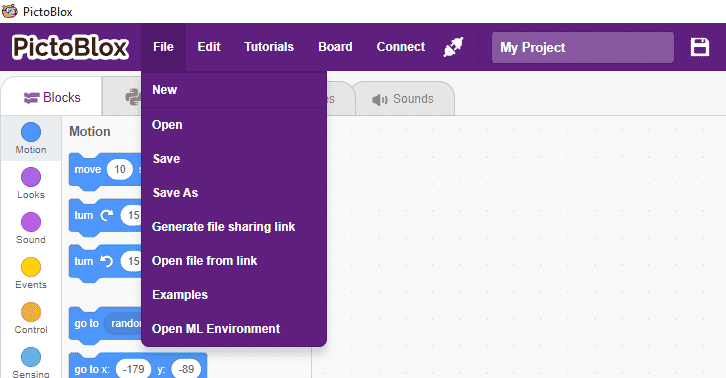

- Open PictoBlox and create a new file.

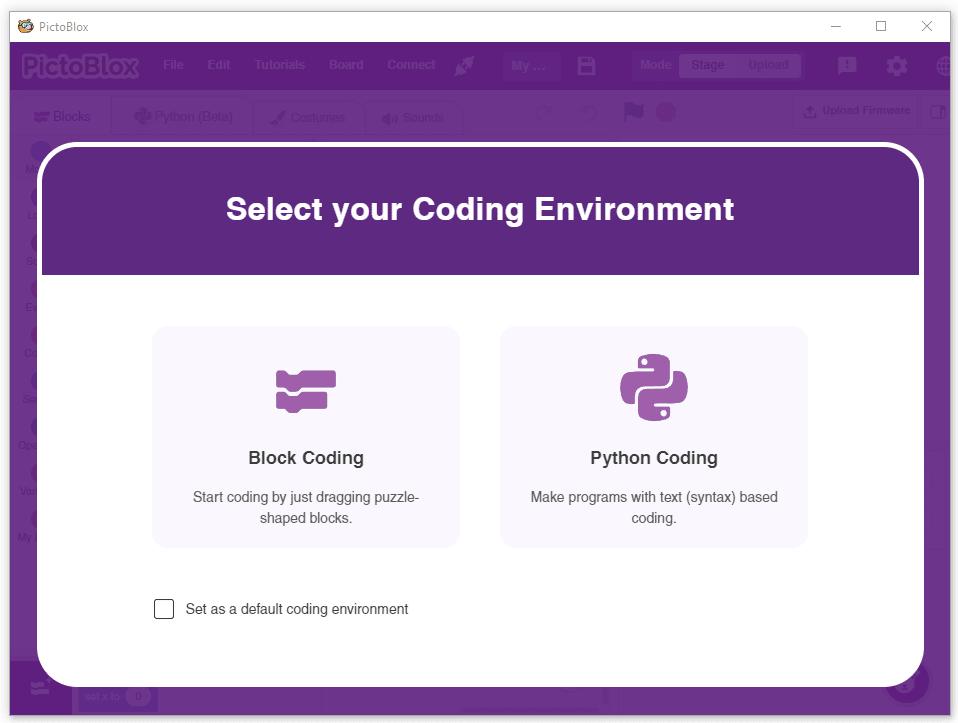

- Select the coding environment as Block Coding.

- To access the ML Environment, select the “Open ML Environment” option under the “Files” tab.

- You’ll be greeted with the following screen.

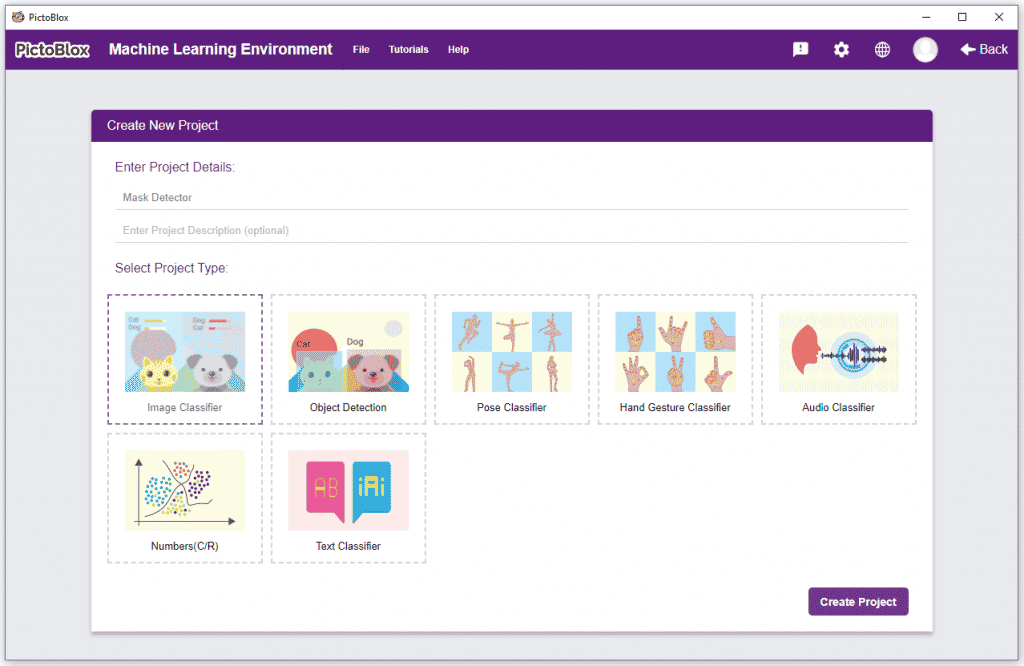

- Click on “Create New Project”.

- A window will open. Type in a project name of your choice and select the “Image Classifier” extension. Click the “Create Project” button to open the Image Classifier window.

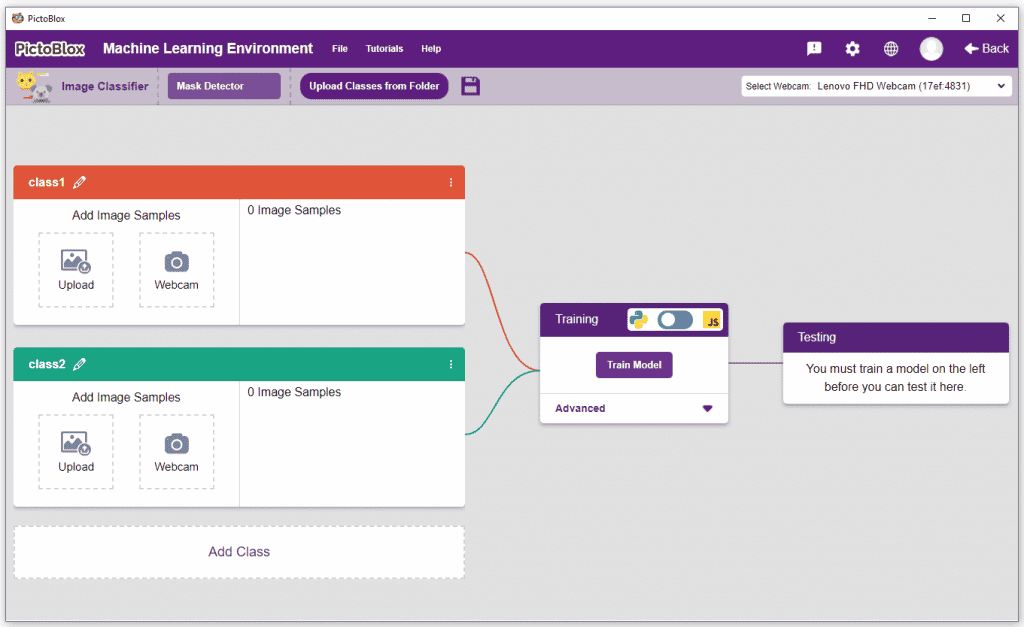

- You should see the Image Classifier workflow with two classes already created for you. Your environment is all set. Now it’s time to upload the data.

Collecting and Uploading the Data

A class is a category into which the Machine Learning model sorts images. Similar images are grouped into the same class.

You have to provide two things for each class:

- Class Name

- Image Data: This data can be captured from the webcam or uploaded from local storage or Google Drive.

For this project, we’ll need three classes:

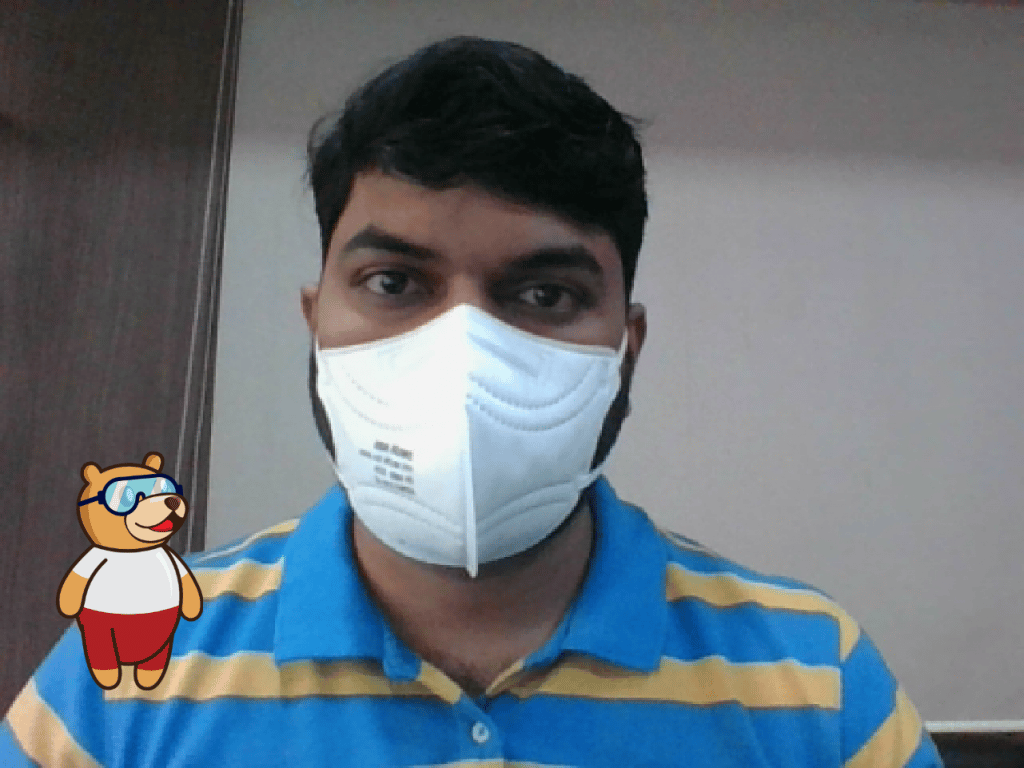

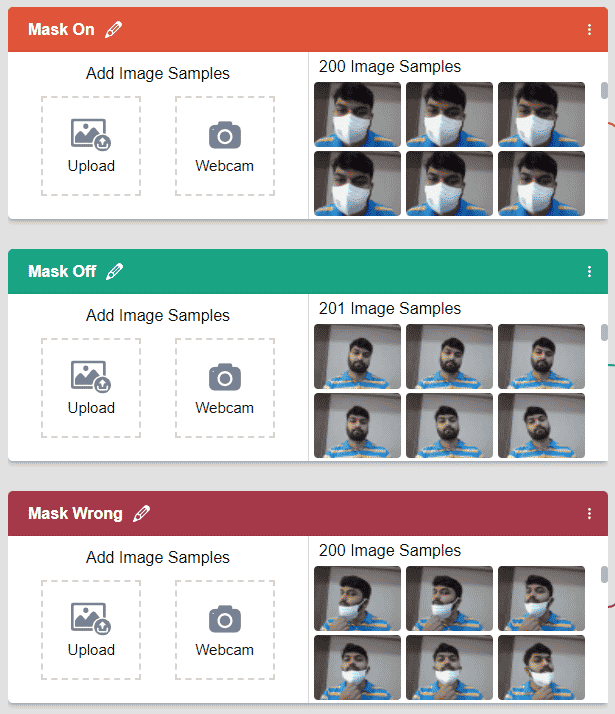

- Wearing mask – Mask On

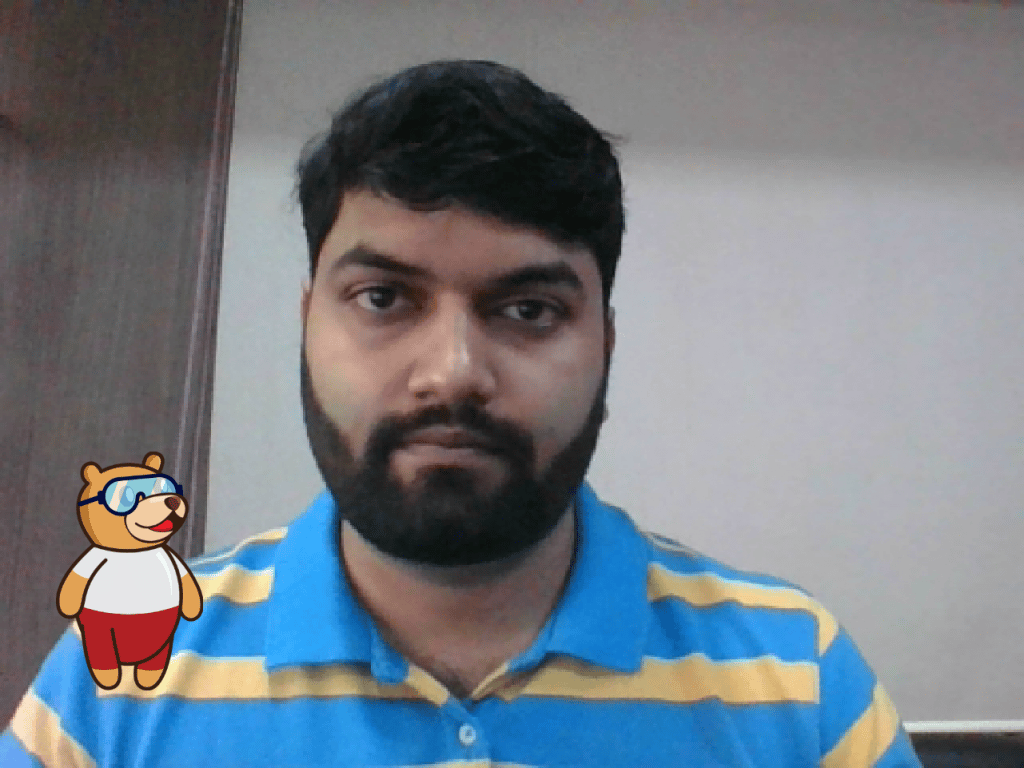

- Not wearing a mask – Mask Off

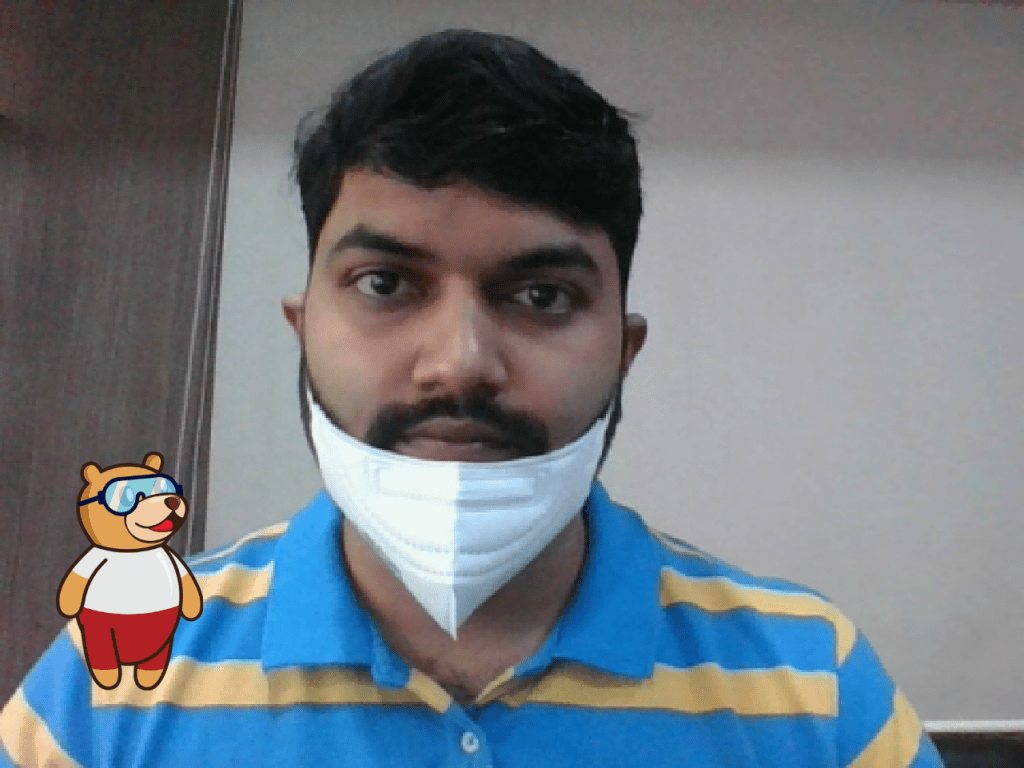

- Wearing mask incorrectly – Mask Wrong

Follow the steps to upload the data for the classes:

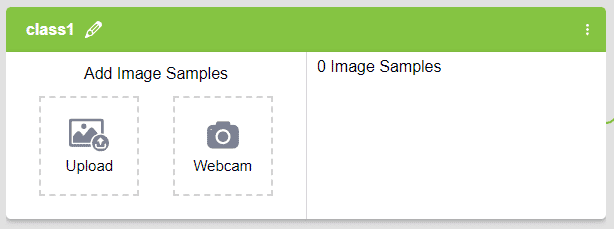

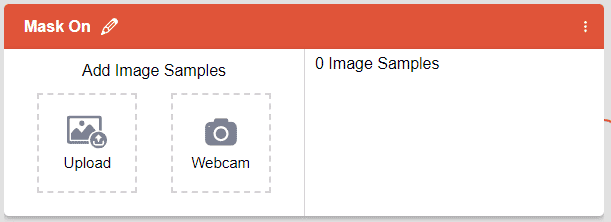

- Rename the first class to Mask On.

- Click the Webcam button.

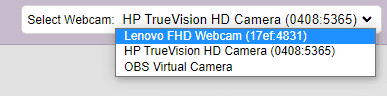

If you want to change your camera feed, you can do it from the webcam selector in the top right corner.

- Next, click on the “Hold to Record” button to capture images of yourself wearing the mask correctly. Take 200 photos with different head orientations.

If you want to delete an image, hover over it and click the delete button.

Once uploaded, you will be able to see the images in the class.

- Rename Class 2 to Mask Off and capture its samples from the webcam, this time without a mask.

- Click the “Add Class” button, and a new class will appear in your environment. Rename it to Mask Wrong and capture its samples from the webcam while wearing the mask incorrectly.

Note: You must add at least 20 samples to each of your classes for your model to train. More samples will lead to better results.
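You can check the 20-sample rule from the note above before you start training. This minimal sketch assumes you have counted the images in each class yourself. The counts and the `classes_needing_samples` helper are hypothetical and are not part of PictoBlox.

```python
MIN_SAMPLES = 20  # minimum number of samples per class, per the note above


def classes_needing_samples(sample_counts, minimum=MIN_SAMPLES):
    """Return the names of classes that have fewer than `minimum` samples."""
    return [name for name, count in sample_counts.items() if count < minimum]


# Hypothetical counts: the third class is still short of samples.
counts = {"Mask On": 200, "Mask Off": 200, "Mask Wrong": 12}
print(classes_needing_samples(counts))  # ['Mask Wrong']
```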


Each class now has data from which the model can derive patterns. To extract and use these patterns, we must train the model.

Training the Model

Now that we have gathered the data, it’s time to teach our model how to classify new, unseen data into these three classes. To do this, we train the model. During training, the model extracts meaningful information from the images and uses it to update its weights. Once these weights are saved, we can use the model to make predictions on previously unseen data.
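Here is a toy example in plain Python that shows what "updating the weights" means. It fits a single weight `w` in `y ≈ w * x` using gradient descent on mean squared error. It is not an image classifier, and it is not how PictoBlox trains internally. It does follow the same loop, though: repeat for a number of epochs, measure the error, and adjust the weight to reduce it.

```python
def train(xs, ys, epochs=100, learning_rate=0.01):
    """Fit y ≈ w * x with plain gradient descent on mean squared error."""
    w = 0.0  # initial weight
    n = len(xs)
    for _ in range(epochs):
        # Gradient of mean((w * x - y) ** 2) with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        # Move the weight against the gradient, scaled by the learning rate.
        w -= learning_rate * grad
    return w


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]  # generated with w = 2
w = train(xs, ys)
print(w)  # close to 2.0
```

With `learning_rate=0.01`, the weight converges toward 2.0, the value used to generate `ys`. With a much larger rate, such as 0.2, each update overshoots and the weight diverges. This is why the learning rate, discussed below, matters.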

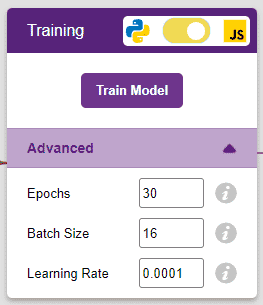

However, before training the model, there are a few hyperparameters that you should be aware of. Click on the “Advanced” tab to view them.

There are three hyperparameters you can adjust here:

- Epochs – The number of times the entire dataset is passed through the model during training. For example, with 10 epochs, the model sees every training sample 10 times. Increasing the number of epochs often improves performance, up to a point.

- Batch Size – The number of samples processed in one training step. For example, if your dataset has 160 samples and the batch size is 16, each epoch takes 160/16 = 10 steps. You’ll rarely need to change this hyperparameter.

- Learning Rate – Controls how much the model changes its weights after each step. Even small changes to this value can have a large impact on model performance. Typical values lie between 0.0001 and 0.001.
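The batch-size arithmetic above can be generalized. When the dataset size is not a multiple of the batch size, the last batch is smaller, so the step count rounds up. This sketch assumes that rounding behavior, which is common in training libraries but not confirmed for PictoBlox. The 600-sample case assumes 200 images in each of the three classes.

```python
import math


def steps_per_epoch(num_samples, batch_size):
    """Number of training steps needed to pass over the dataset once."""
    # The last batch may be smaller than batch_size, so round up.
    return math.ceil(num_samples / batch_size)


def total_steps(num_samples, batch_size, epochs):
    """Total number of training steps across all epochs."""
    return steps_per_epoch(num_samples, batch_size) * epochs


print(steps_per_epoch(160, 16))  # 10, as in the example above
print(total_steps(160, 16, 10))  # 100
print(steps_per_epoch(600, 16))  # 38 (the last batch holds 8 samples)
```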

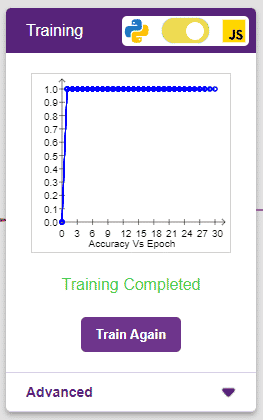

Let’s train the model with the default hyperparameters and see how it performs. You can train the model in either JavaScript or Python; use the switch at the top of the Training box to choose between them.

We’ll train this model in JavaScript. Click the “Train Model” button to start training. We don’t need to change any hyperparameters for this model.

The model shows great results! In the accuracy graph, the x-axis shows the epochs and the y-axis shows the corresponding accuracy, which ranges from 0 to 1. The higher the accuracy, the better the model.
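Accuracy is the fraction of images the model classifies correctly, which is why it ranges from 0 to 1. Here is a minimal sketch with hypothetical predicted and true labels:

```python
def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels (0 to 1)."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty lists of equal length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Hypothetical labels: three of the four predictions are correct.
predicted = ["Mask On", "Mask Off", "Mask On", "Mask Wrong"]
actual = ["Mask On", "Mask Off", "Mask Wrong", "Mask Wrong"]
print(accuracy(predicted, actual))  # 0.75
```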

Testing the Model

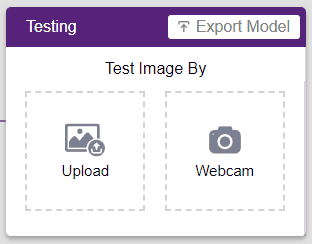

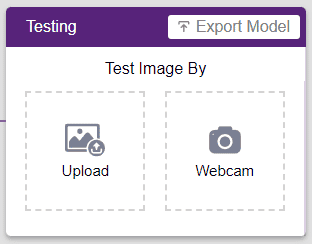

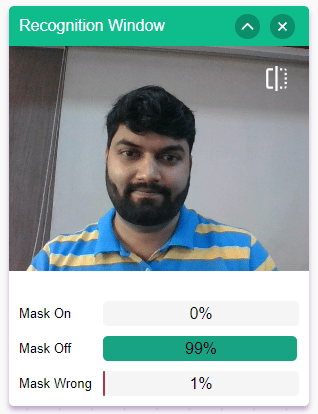

Now that the model is trained, let’s check whether it delivers the expected results. We can test the model either with the device’s camera or by uploading an image from the device’s storage. Let’s start with the webcam.

Click on the “Webcam” option in the testing box and the model will start predicting based on the image in the window.

Great! The model makes predictions in real time. Now close the window by clicking the cross at the top right of the testing box.

Now we can export our model and make a project in the block coding environment.

Exporting the Model to the Block Coding Environment

Click on the “Export Model” button on the top right of the Testing box, and PictoBlox will load your model into the Block Coding Environment.

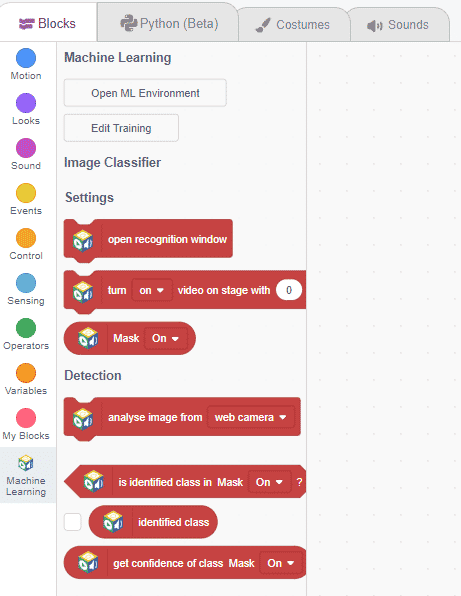

Notice that the left-side panel now contains blocks for the model we just trained.

Each block here has a specific function:

- open recognition window: Opens a window that uses the device’s camera to make predictions.

- turn () video on stage with () transparency: Shows the video captured by the camera on the stage, with the chosen transparency.

- analyse image from (): Analyzes the image from the selected input.

- is identified class is ()?: Checks whether the analyzed image belongs to the selected class.

- get the confidence in class (): Returns the confidence level of the classification for the selected class. It can be compared with a threshold value.
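Using confidence as a threshold can be sketched in plain Python. This assumes confidences between 0 and 1 (PictoBlox may report them on a different scale, such as a percentage) and an arbitrary threshold of 0.8. `decide` is a hypothetical helper, not a PictoBlox block.

```python
def decide(confidences, threshold=0.8):
    """Return the most confident class, or None if it is below the threshold."""
    best_class = max(confidences, key=confidences.get)
    if confidences[best_class] >= threshold:
        return best_class
    return None  # not confident enough to report any class


# Hypothetical confidence values for two camera frames.
print(decide({"Mask On": 0.92, "Mask Off": 0.05, "Mask Wrong": 0.03}))  # Mask On
print(decide({"Mask On": 0.45, "Mask Off": 0.40, "Mask Wrong": 0.15}))  # None
```

A threshold like this keeps the sprite from reacting to frames where the model is unsure, such as when nobody is in front of the camera.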

Script in the Block Coding Environment

Now let’s use our model in an actual project, built in the Block Coding Environment.

We’ll be making a script that uses our model to analyze an image from the device’s camera. Once that’s done, our sprite Toby will tell us if the person in the image is wearing a mask, wearing a mask incorrectly, or not wearing a mask at all.

Let’s begin!

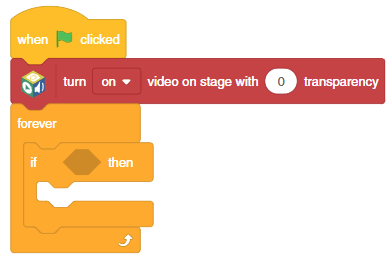

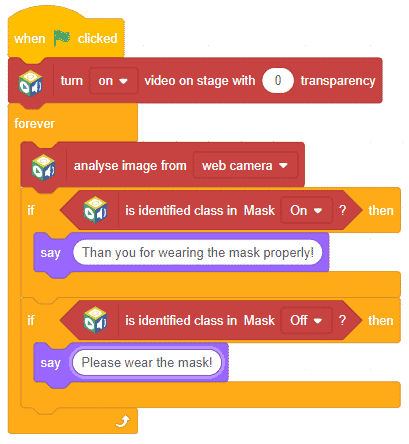

- Add the when flag clicked block and the forever block into the scripting area and snap them together.

- Add a turn () video on stage with () transparency block above the forever block. Select ON and set the transparency to 0.

- Drag and drop an if block inside the forever block.

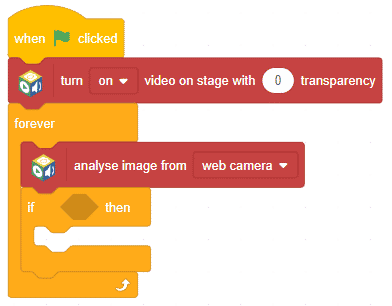

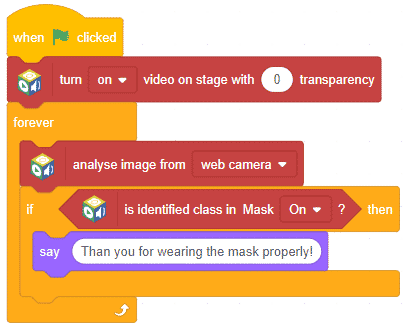

- Add the analyse image from () block inside the forever block, above the if block, and select web camera as the feed.

- Next, add the is identified class is ()? block to the condition slot of the if block. Select Mask On as the class.

- From the Looks palette, add a say () block inside the if block. Write the message – Thank you for wearing the mask properly!

- Duplicate the if block and snap it below the first if block. In the second is identified class is ()? block, select Mask Off as the class. Change the text in the say block to – Please wear the mask!

- Duplicate the if block again and snap it below the second if block. In the third is identified class is ()? block, select Mask Wrong as the class. Change the text in the say block to – Please wear the mask properly!
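If you prefer text, the decision logic of the finished script can be summarized in plain Python. This only illustrates what the three if blocks do and does not call PictoBlox. `message_for` and the sample classes in the loop are hypothetical stand-ins for the analyse image from () and is identified class is ()? blocks.

```python
# Messages Toby says for each class, taken from the steps above.
MESSAGES = {
    "Mask On": "Thank you for wearing the mask properly!",
    "Mask Off": "Please wear the mask!",
    "Mask Wrong": "Please wear the mask properly!",
}


def message_for(identified_class):
    """Mirror the three if blocks: return the message for the identified class."""
    # Returns None when no if block matches, so Toby says nothing new.
    return MESSAGES.get(identified_class)


# Stand-in for the forever loop: each item plays the role of one
# analysed webcam frame (hypothetical values).
for identified in ["Mask On", "Mask Wrong", "Mask Off"]:
    print(message_for(identified))
```

A dictionary lookup replaces the chain of if blocks here because each class maps to exactly one message.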

The script is complete. Click the green flag to run the script.

Save the project as Mask Detector.

There you have it! You just used image classification to make your very own mask detection project.